Site migrations and 301 redirect management are among the most technical tasks in SEO. Classic methods (exact matching, fuzzy matching, and manual checks) have long dominated. Now, semantic analysis using vector embeddings (generated through providers such as OpenAI or Gemini, or locally through Ollama with a model like Llama3) is emerging as the new frontier for mapping old URLs to their best equivalents.

The embeddings approach goes beyond simple text comparison: it captures page intent and meaning, reducing false positives and enabling large-scale automation (for example, migrations involving thousands or millions of URLs).

But beware: this technique should be used to complement fuzzy matching and always with human validation.

What is semantic analysis using vector embeddings?

Semantic analysis using vector embeddings involves representing texts (words, sentences, pages…) as numerical vectors in a multidimensional space. Each dimension of this vector encodes a particular aspect of the text's meaning: thus, two texts with similar meaning or intent will have vectors close to each other in that space, even if their words or structure differ.

Modern embedding models (from machine learning and natural language processing, such as Word2Vec, BERT, or models from providers like OpenAI or Gemini) are capable of “understanding” the context and deeper meaning of the content, far beyond a simple keyword match. This is what makes it possible, during an SEO migration, to compare pages that don't necessarily have textual similarity but serve the same role, share the same search intent, or address a similar problem.

In SEO, semantic analysis with vector embeddings is revolutionizing mapping:

- It lets you identify which pages are “closest” in terms of meaning,

- It facilitates the automatic grouping of similar content,

- It makes reliable automation of large-scale redirect plans possible.

For example, a page about “running shoes” can be matched to a page titled “sneakers for running” because their contents are close in vector space, even if the words differ.
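To make "close in vector space" concrete, here is a minimal sketch of cosine similarity, a common way to compare embeddings. The three-dimensional vectors and the URLs are invented for illustration (real embeddings have hundreds or thousands of dimensions), and this is not necessarily the exact measure Screaming Frog uses internally.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction, so treat it as unrelated
    return dot / (norm_a * norm_b)


def rank_candidates(vector, candidates):
    """Sort candidate URLs from most to least similar to the given vector."""
    scored = [(url, cosine_similarity(vector, v)) for url, v in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy vectors, made up for this example only.
old_page = [0.9, 0.1, 0.3]  # old page about "running shoes"
candidates = {
    "/sneakers-for-running": [0.8, 0.2, 0.35],
    "/garden-furniture": [0.1, 0.9, 0.2],
}

ranking = rank_candidates(old_page, candidates)
best_url, best_score = ranking[0]
print(best_url, round(best_score, 3))
```

The page whose vector points in nearly the same direction as the old page ranks first, even though the two titles share few words.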

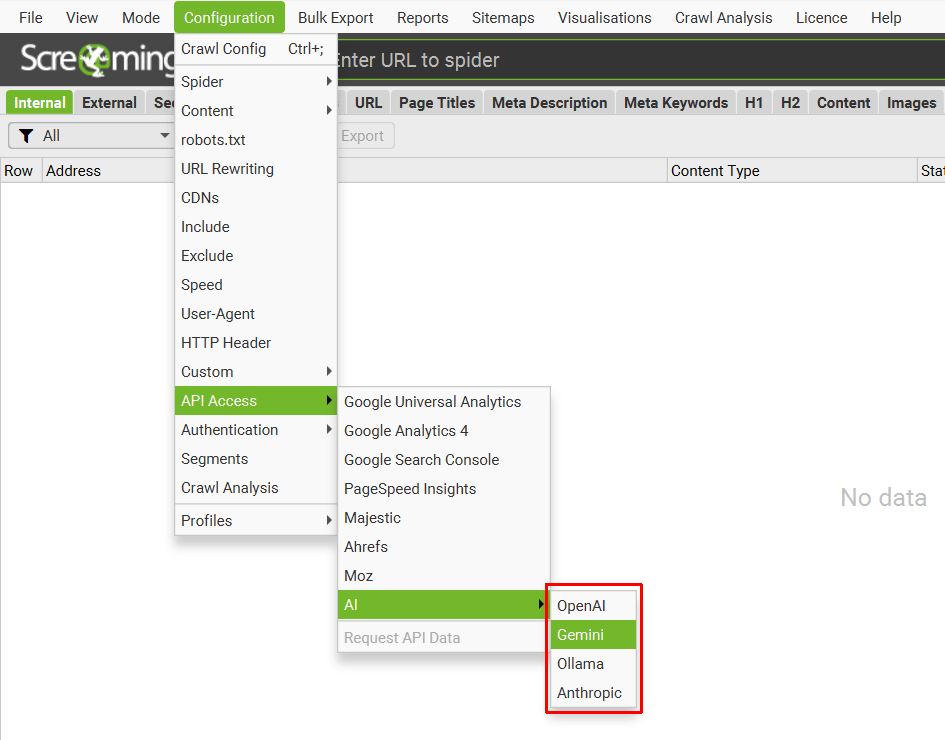

1) Choose an AI provider for embeddings

- Screaming Frog supports OpenAI, Gemini, Ollama…

- Tip: For demanding projects, Ollama/Llama3 delivers relevant and fast results locally.

Be sure to:

- Plan for API quotas (large volumes = significant cost).

- Test models according to the type of content (e‑commerce, editorial, technical).
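A rough way to anticipate the API bill is simple arithmetic: number of URLs, times average tokens per page, times the provider's price. The sketch below assumes that pricing is per million tokens; the figures passed in are placeholders, not real prices, so substitute the values from your provider's pricing page.

```python
def estimate_embedding_cost(num_urls, avg_tokens_per_page, price_per_million_tokens):
    """Return (total_tokens, estimated_cost) for embedding every page once."""
    total_tokens = num_urls * avg_tokens_per_page
    cost = total_tokens / 1_000_000 * price_per_million_tokens
    return total_tokens, cost


# Placeholder inputs: 50,000 URLs, 1,500 tokens per page on average,
# and a hypothetical price of 0.10 per million tokens.
tokens, cost = estimate_embedding_cost(50_000, 1_500, 0.10)
print(f"{tokens:,} tokens, estimated cost: {cost:.2f}")
```

Remember to count both the old and the new site, since both are crawled.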

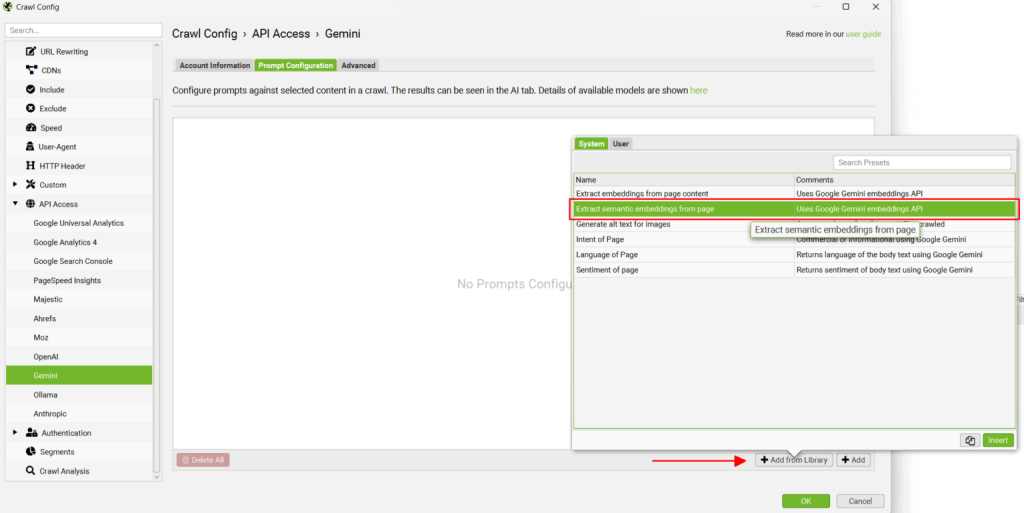

2) Add the embeddings prompt from the library

- Favor a prompt that analyzes the page's main content area (avoid sending the menu, footer…).

- The more precise the analysis area, the higher the quality of the redirection recommendation (if in doubt, customize the configuration).
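To illustrate why the analysis area matters, here is a minimal sketch that keeps only the text outside navigation and footer elements before it would be sent for embedding. It uses Python's standard `html.parser` and a hypothetical list of tags to skip; it is an illustration of the idea, not how Screaming Frog's content area setting is implemented.

```python
from html.parser import HTMLParser

# Assumption: these tags hold boilerplate rather than main content.
SKIPPED_TAGS = {"nav", "header", "footer", "aside", "script", "style"}


class MainContentExtractor(HTMLParser):
    """Collect text that is not nested inside any skipped tag."""

    def __init__(self):
        super().__init__()
        self.skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIPPED_TAGS:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIPPED_TAGS and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if self.skip_depth == 0 and text:
            self.chunks.append(text)


def extract_main_text(html):
    parser = MainContentExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


sample = """<html><body>
<nav><a href="/">Home</a></nav>
<main><h1>Running shoes</h1><p>Lightweight shoes for road running.</p></main>
<footer>Legal notice</footer>
</body></html>"""

main_text = extract_main_text(sample)
print(main_text)
```

Without this filtering, every page would share the same menu and footer text, which pulls all vectors closer together and blurs the matches.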

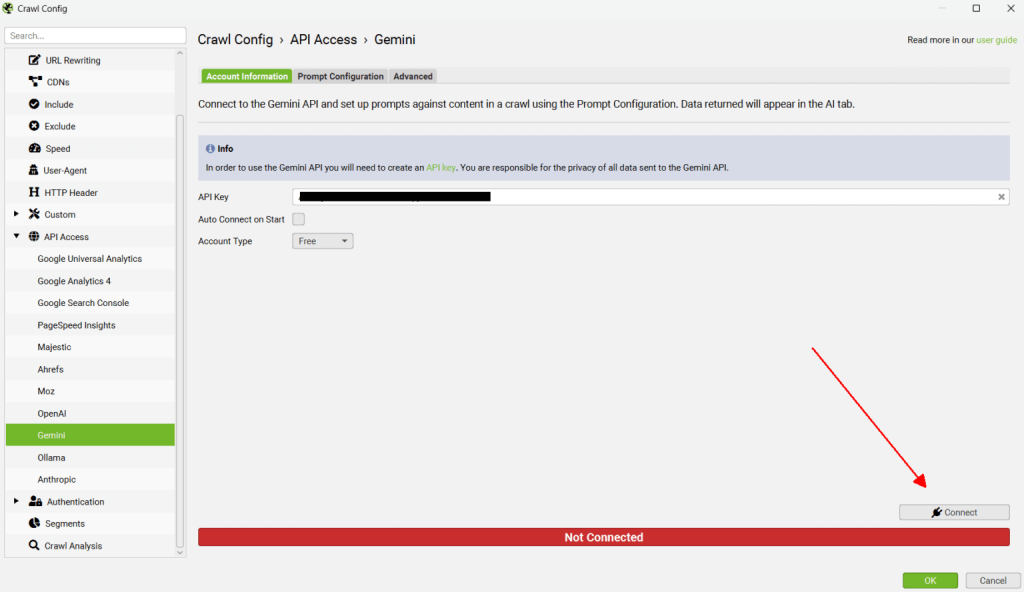

3) Connect Screaming Frog to the API

- Always validate the connection before crawling.

- Tip: for very large sites, batch URLs or crawl at night (avoids overload and throttling).

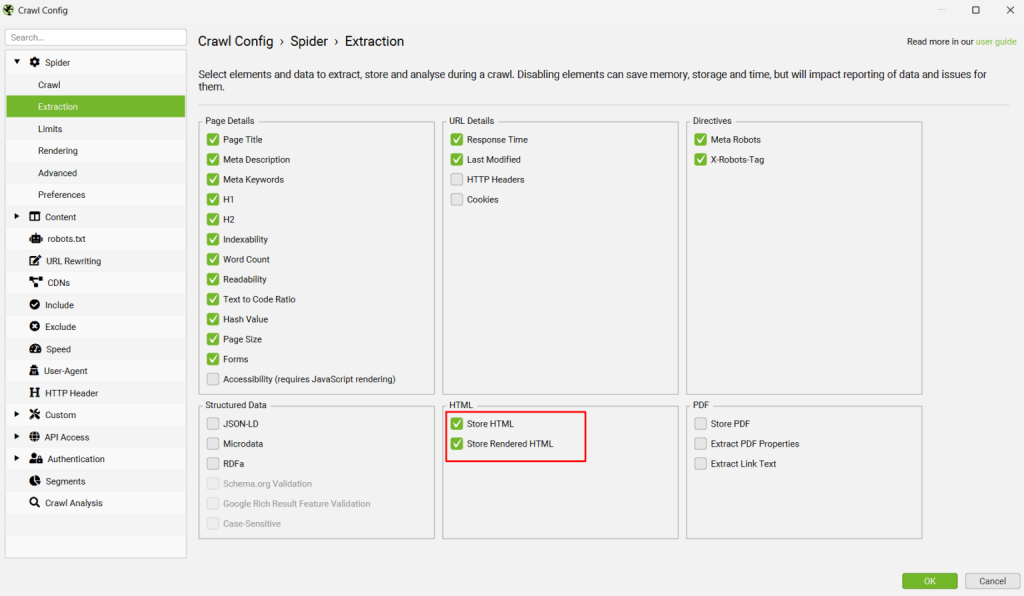

4) Enable "Store HTML" and "Store Rendered HTML"

- Useful for JavaScript sites, SPAs…

- Expert tip: combining the two ("raw HTML" and "rendered HTML") lets you detect differences between the content present in the source HTML and the content that only appears after JavaScript rendering.

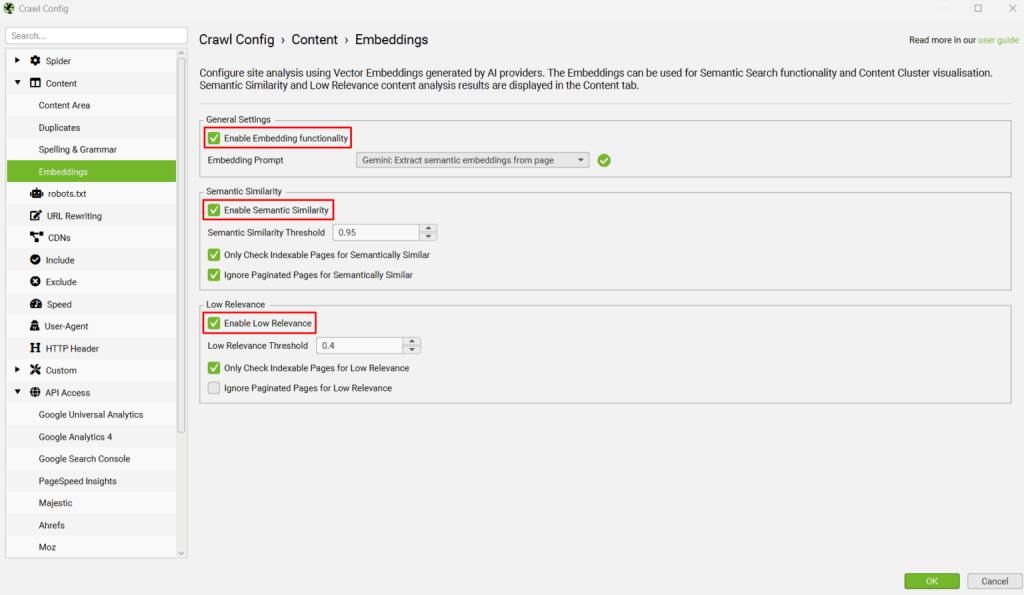

5) Enable embedding features

- In addition to "Semantic Similarity", enabling the "Low Relevance" indicator helps identify isolated or "orphan" content (super useful during migrations to avoid Soft 404s).

- For international sites or complex editorial mappings, filter results by language or category using the tool.

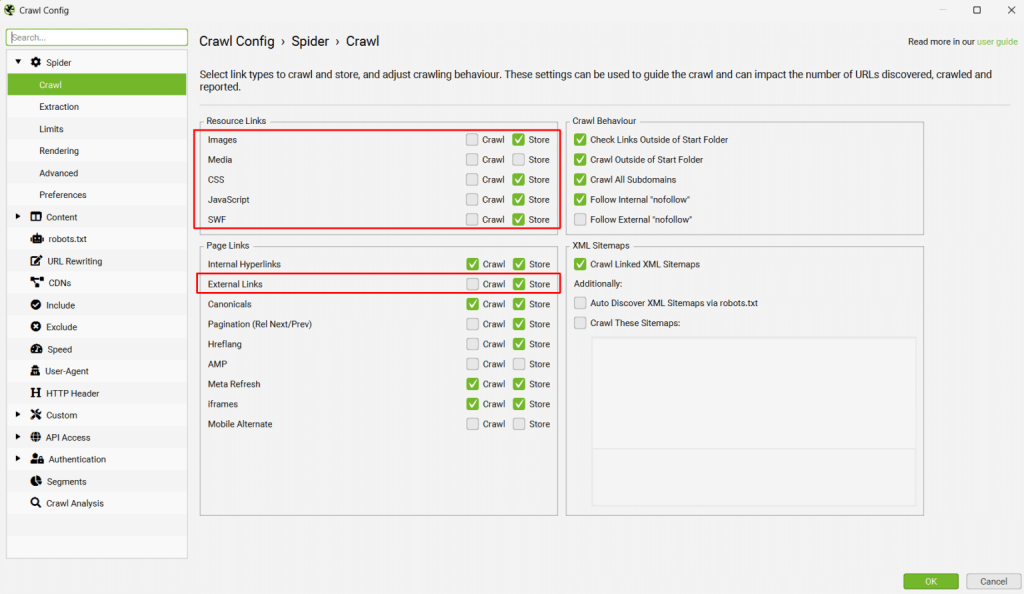

6) Disable crawling of unnecessary resources

- To optimize: disable crawling of images, JS, CSS and external links (save time, reduce the API bill).

7) Crawl old and new sites in parallel

- "List" mode: import lists of old and new URLs.

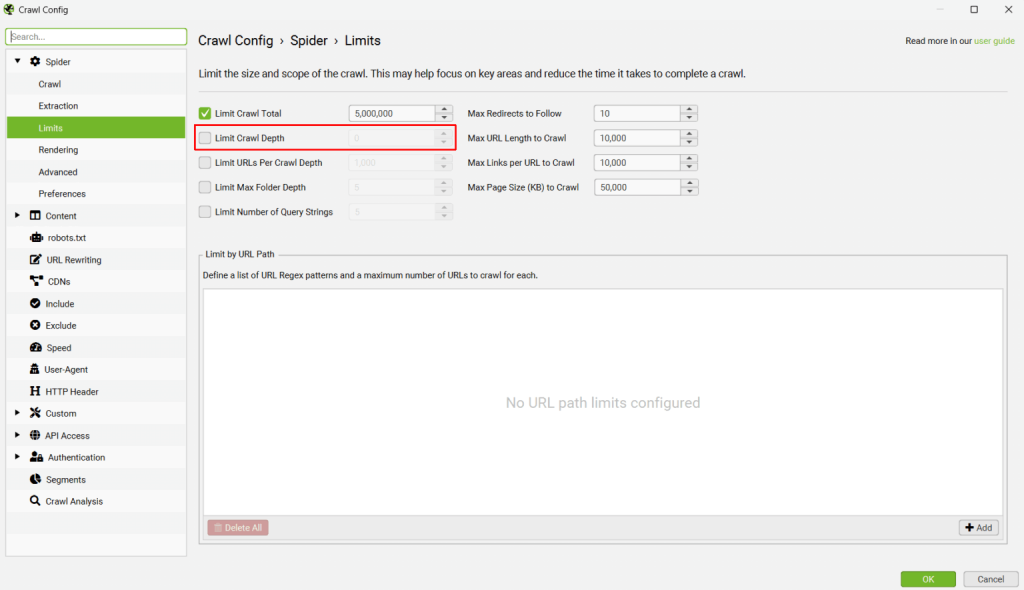

- For large volumes (>10,000 pages), consider splitting into multiple crawls to monitor performance and stability.
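Splitting a large URL list into several crawls can be prepared with a few lines of Python. This is a minimal sketch: the 10,000 batch size echoes the threshold mentioned above, and the example.com URLs are placeholders.

```python
def split_into_batches(urls, batch_size):
    """Split a list of URLs into consecutive batches of at most batch_size."""
    if batch_size <= 0:
        raise ValueError("batch_size must be a positive integer")
    return [urls[i:i + batch_size] for i in range(0, len(urls), batch_size)]


# Placeholder URL list standing in for the old site's inventory.
urls = [f"https://old.example.com/page-{n}" for n in range(25_000)]
batches = split_into_batches(urls, 10_000)

for index, batch in enumerate(batches, start=1):
    print(f"batch {index}: {len(batch)} URLs")
```

Each batch can then be saved as a text file, one URL per line, and uploaded in "List" mode.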

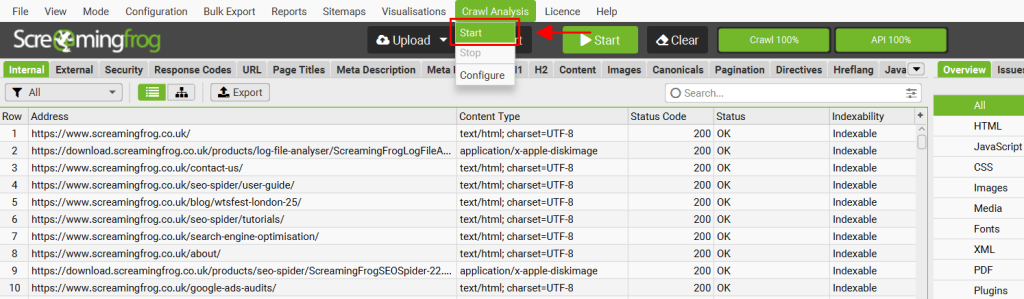

8) Run the crawl analysis

To populate the “Semantically Similar” and “Low Relevance Content” filters in the Content tab, you need to run an analysis of the crawl once the crawl is finished.

- In the menu, click "Crawl Analysis", then "Start".

Note that it is also possible to automatically run the analysis at the end of the crawl by selecting “auto-analyze at end of crawl” in the configuration menu.

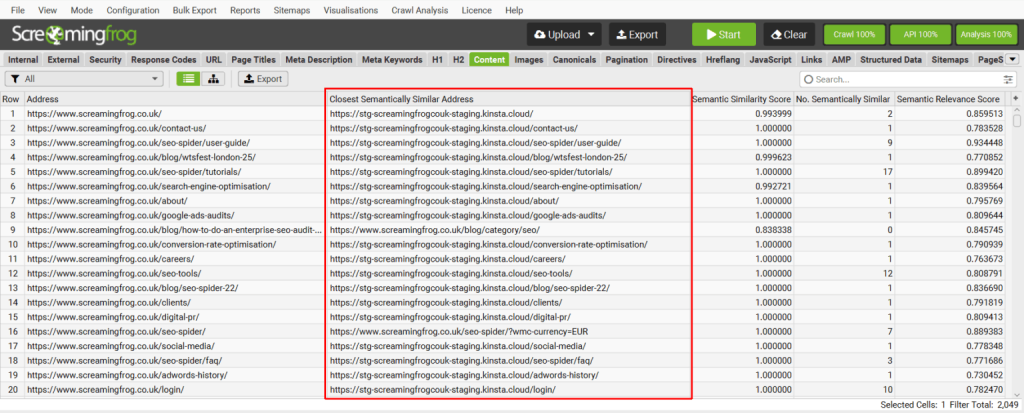

9) View and leverage matches

- Pay particular attention to the "Closest Semantically Similar Address" column: it is the key column for the mapping.

- Sort by "Semantic Similarity Score": > 0.95 = excellent match / between 0.8 and 0.95 = needs auditing / < 0.8 = to monitor or match manually.

- Use the bulk export to create your redirect files (.csv, .xlsx).
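The score thresholds above can be applied to an export with a short script. This is a sketch under assumptions: the "Address" column name for the old URL and the inline sample rows are illustrative, so check the column headers against your actual export file.

```python
import csv
import io


def classify(score):
    """Bucket a similarity score using the thresholds given above."""
    if score > 0.95:
        return "excellent"
    if score >= 0.8:
        return "audit"
    return "manual"


def build_redirect_rows(export_rows):
    """Turn export rows into old URL / new URL pairs with a review status."""
    rows = []
    for row in export_rows:
        score = float(row["Semantic Similarity Score"])
        rows.append({
            "old_url": row["Address"],  # assumed column name for the old URL
            "new_url": row["Closest Semantically Similar Address"],
            "score": score,
            "status": classify(score),
        })
    return rows


# Inline sample standing in for an exported CSV file (invented rows).
sample_export = io.StringIO(
    "Address,Closest Semantically Similar Address,Semantic Similarity Score\n"
    "https://old.example.com/running-shoes,https://new.example.com/sneakers-for-running,0.97\n"
    "https://old.example.com/trail-guide,https://new.example.com/trail-running-tips,0.88\n"
    "https://old.example.com/press-2015,https://new.example.com/about,0.61\n"
)

redirects = build_redirect_rows(csv.DictReader(sample_export))
for r in redirects:
    print(r["status"], r["old_url"], "->", r["new_url"])
```

Only the "excellent" rows are candidates for going into the redirect file without review; the others still need a human decision.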

A few additional tips

- Combine techniques: Fuzzy matching and embeddings deliver better results together, especially for complex cases (categories, depths, atypical migrations).

- Enrich the data: Add other attributes to embeddings (title, H1, SKU, breadcrumb…) to improve the relevance of matches.

- Filter by year/metadata: If you have “publish_year” or any useful metadata, filter your results to improve freshness and relevance.

- Automate validation: Use Python or Colab scripts to speed up validation (see the notebook shared by iPullRank or Chris Lever's script).
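One possible way to combine the two techniques is a weighted blend of the semantic score and a fuzzy URL similarity. The sketch below uses `difflib.SequenceMatcher` from the standard library for the fuzzy part; the 0.7 weight, the paths, and the scores are arbitrary assumptions to tune on your own data, not values from the tools mentioned here.

```python
from difflib import SequenceMatcher


def fuzzy_ratio(a, b):
    """Character-level similarity between two strings, from 0.0 to 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def hybrid_score(semantic_score, old_path, new_path, semantic_weight=0.7):
    """Blend the embedding score with fuzzy URL similarity (weight is an assumption)."""
    fuzzy = fuzzy_ratio(old_path, new_path)
    return semantic_weight * semantic_score + (1 - semantic_weight) * fuzzy


def pick_best(old_path, candidates, semantic_weight=0.7):
    """candidates is a list of (new_path, semantic_score); return the best pair."""
    return max(
        candidates,
        key=lambda c: hybrid_score(c[1], old_path, c[0], semantic_weight),
    )


# Invented example: two candidates with almost identical semantic scores.
old_path = "/blog/2019/seo-migration-checklist"
candidates = [
    ("/blog/site-move-guide", 0.91),
    ("/blog/seo-migration-checklist", 0.90),
]

best_path, best_semantic = pick_best(old_path, candidates)
print(best_path)
```

When semantic scores are nearly tied, the URL similarity breaks the tie; when they differ widely, the semantic score dominates.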

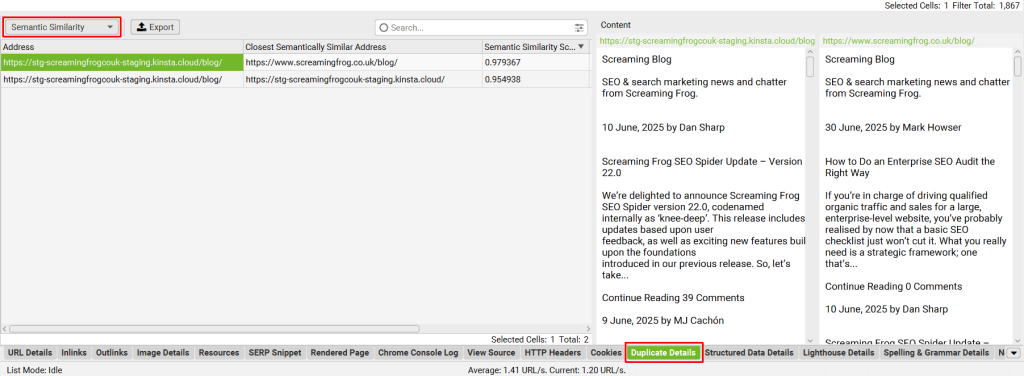

10) Use duplicates/alternatives

- The “No. Semantically Similar” column indicates how many alternatives exist.

- Always review the “second best matches” when in doubt or when the score is low.

- To prevent redirect loops, check that the matched destination is not itself an old URL that is being redirected.
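The loop check can be automated once the mapping is in a dictionary of old URL to new URL. This is a minimal sketch with invented paths: it flags destinations that are themselves redirected and follows each chain to see whether it ends or loops.

```python
def find_chained_targets(redirects):
    """Return the entries whose destination is itself a redirected URL."""
    return {old: new for old, new in redirects.items() if new in redirects}


def resolve(url, redirects):
    """Follow redirects from url; return the final URL, or None if a loop is hit."""
    seen = set()
    while url in redirects:
        if url in seen:
            return None  # we came back to a URL already visited: loop
        seen.add(url)
        url = redirects[url]
    return url


# Invented mapping: /a and /b point at each other, /c reaches /e in two hops.
redirects = {"/a": "/b", "/b": "/a", "/c": "/d", "/d": "/e"}

chained = find_chained_targets(redirects)
looping = [url for url in redirects if resolve(url, redirects) is None]
print("chained:", chained)
print("looping:", looping)
```

Chains that do end can be flattened by pointing each old URL directly at the value returned by `resolve`, which avoids multi-hop redirects.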

Alternative options and tools

- Python scripts / Colab: to extract embeddings and match locally (see iPullRank and Chris Lever Colabs).

- Advanced fuzzy matching: Google Sheets, Rapid301, open-source apps.

- Redirect Mapper: tools like TF-IDF Matcher or the online app mentioned by Chris Lever.

Conclusions & Tips

Using embeddings for redirect mapping is a major advancement for automating large-scale SEO migrations.

Although imperfect, this technique, combined with fuzzy matching and human review, makes it possible to:

- Dramatically speed up the process,

- Reduce errors and soft 404s,

- Ensure a lasting transfer of popularity from old URLs.

Key takeaway: Best practices combine expert judgment, data enrichment from other fields, and hybrid tools to save time and improve accuracy.

Abondance's expertise is:

- 2,000+ audits: delivered for SMEs, mid-sized companies and large enterprises.

- 15+ years of experience: senior consultants & Abondance ambassadors.

- Proven method: prioritized roadmap, concrete actions.

The article “Create a redirect plan with Screaming Frog and vector embeddings” was published on the site Abondance.