Google does not read your pages in full to generate its AI responses, even if they run to 3,000 words. Dan Petrovic's work shows the existence of a grounding budget of about 2,000 words per query, distributed among only a few sources, with a clear advantage for the highest-ranked pages. This changes the priorities of content strategy: information density matters more than length.

Key takeaways:

- Google has a grounding budget of about 2,000 words per query, shared among 3 to 5 main sources.

- Position in the ranking determines the share of this budget: source #1 receives about twice as much text as #5.

- At the page level, selection caps at around 540 words; beyond 1,500–2,000 words, gains are marginal.

- Concise, highly targeted pages achieve a much higher coverage rate than 4,000-word slabs: density > length.

Where do the numbers come from?

Dan Petrovic analyzed a large corpus of real queries to understand how Google feeds its Gemini systems with content from the web. The study covers 7,060 queries with at least three sources, comparing the actual grounding snippets sent to the model with the full content of 2,275 tokenized pages.

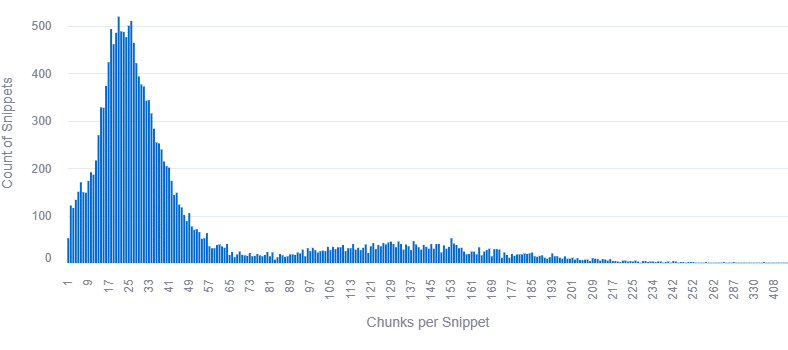

The dataset includes 883,262 snippets, with an average of 15.5 words per chunk, along with detailed statistics on chunk lengths (words, characters, words per chunk). To observe grounding, Dan does not try to "guess" the snippets: he looks at the exact segments provided to the model as context before generation, which allows a precise match with the source text.
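To make the idea of extractive matching concrete, here is a minimal sketch. It is not Dan's pipeline, which has not been published. It assumes that grounding snippets appear verbatim in the page text (up to whitespace) and counts the words they cover; the function names `grounded_words` and `coverage` and the sample text are hypothetical.

```python
def grounded_words(page_text: str, snippets: list[str]) -> int:
    """Count the words of snippets found verbatim in the page text.

    Assumption: exact substring matching after whitespace normalization,
    with no fuzzy matching. Overlapping snippets would be counted twice.
    """
    normalized_page = " ".join(page_text.split())
    total = 0
    for snippet in snippets:
        normalized_snippet = " ".join(snippet.split())
        if normalized_snippet and normalized_snippet in normalized_page:
            total += len(normalized_snippet.split())
    return total


def coverage(page_text: str, snippets: list[str]) -> float:
    """Share of the page's words that were selected for grounding."""
    page_words = len(page_text.split())
    if page_words == 0:
        return 0.0
    return grounded_words(page_text, snippets) / page_words


# Hypothetical example data, for illustration only.
page = "Alpha beta gamma delta. Epsilon zeta eta theta."
snippets = ["beta gamma", "zeta   eta theta.", "not on the page"]

selected = grounded_words(page, snippets)
rate = coverage(page, snippets)
print(f"{selected} words selected, {rate:.1%} coverage")
```

The third snippet is ignored because it does not occur in the page, which is the behavior one would expect from a strictly extractive comparison.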

The grounding budget: about 2,000 words per query

First major finding: each query appears to have a total grounding budget of about 2,000 words, regardless of the length of the source pages. The percentiles of total words per query are: p25 = 1,546, median = 1,929, p75 = 2,325, p95 = 2,798.

This budget is remarkably stable. Adding more sources does not multiply the total volume of text sent to the AI; it is simply redistributed among more documents. Dan specifies that these 2,000 words correspond to a median, with some extreme cases reaching about 5,000 words and one sample of around 30,000 words that he suspects is a bug in his pipeline.

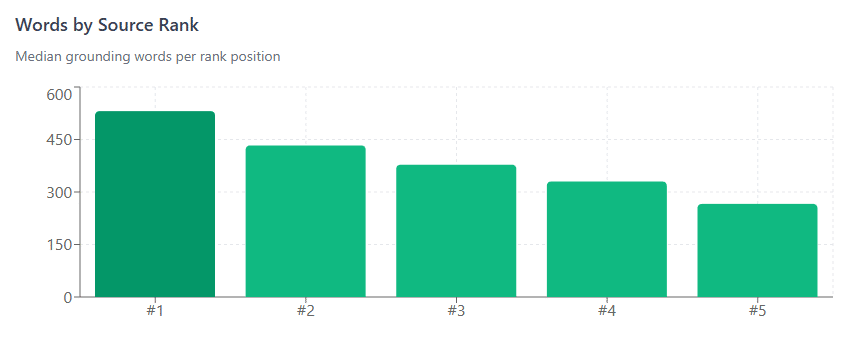

How does Google split this budget between sources?

The study shows that the determining variable is the source rank in the search results, not the length of the content. The overall budget is shared according to position in the SERPs, with the following median distribution:

- Rank #1: 531 words, i.e. 28% of the total budget

- Rank #2: 433 words, i.e. 23%

- Rank #3: 378 words, i.e. 20%

- Rank #4: 330 words, i.e. 17%

- Rank #5: 266 words, i.e. 13%

Being #1 therefore gives you about twice as much grounding text as a page at #5. You are competing for a fixed share of a 2,000-word “cake”; you are not making it bigger. In other words, even very long content won't get more space if its ranking is poor, while shorter content that ranks better will have more text selected.
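The arithmetic behind this split can be checked with a short sketch. It takes the median word counts per rank quoted in the list and normalizes them against their own sum (1,938 words, close to the 1,929-word median budget). This normalization is an assumption made for illustration: the resulting shares land within about one point of the published percentages, which may have been computed differently.

```python
# Median grounding words per source rank, as reported in the study.
MEDIAN_WORDS_BY_RANK = {1: 531, 2: 433, 3: 378, 4: 330, 5: 266}


def budget_shares(words_by_rank: dict[int, int]) -> dict[int, float]:
    """Return each rank's share of the summed medians (an approximation)."""
    total = sum(words_by_rank.values())
    return {rank: words / total for rank, words in words_by_rank.items()}


shares = budget_shares(MEDIAN_WORDS_BY_RANK)
ratio_first_to_fifth = MEDIAN_WORDS_BY_RANK[1] / MEDIAN_WORDS_BY_RANK[5]

for rank, share in shares.items():
    words = MEDIAN_WORDS_BY_RANK[rank]
    print(f"Rank #{rank}: {words} words, {share:.1%} of the summed medians")
print(f"Rank #1 vs rank #5: {ratio_first_to_fifth:.2f}x")
```

The ratio between rank #1 and rank #5 comes out just under 2, which is where the "about twice as much" figure comes from.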

What Google actually takes from a page

At the level of an individual page, the study looks at the distribution of words and characters actually selected for grounding.

The main percentiles are:

- Median: 377 words / 2,427 characters

- p75: 491 words / 3,182 characters

- p90: 605 words / 3,863 characters

- p95: 648 words / 4,202 characters

- Max: 1,769 words / 11,541 characters

In practice, 77% of pages see between 200 and 600 words selected, and the “typical page” sits at around 377 words used in the AI context. There is a plateau around 540 words and 3,500 characters: beyond that, adding text does not really increase the volume actually considered by Gemini.

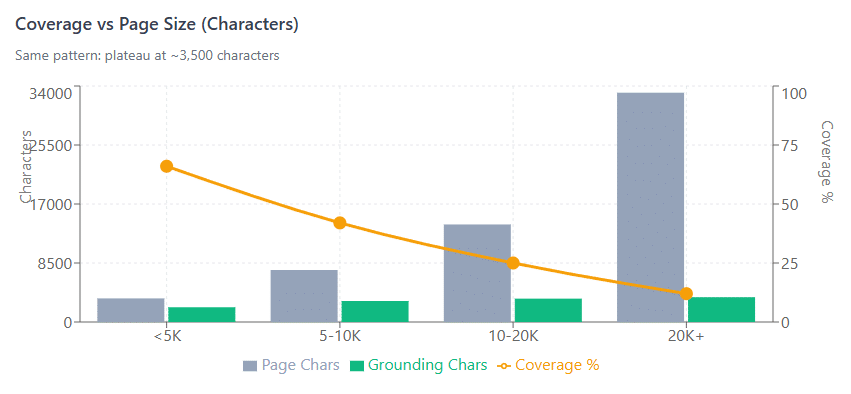

Coverage and page size: why long content runs out of steam

One of the most telling results is the drop in coverage (proportion of the page used) as page size increases. Dan compares page length (in words and characters) to the effective grounding volume, then computes a coverage rate.

In words:

- <1,000 words: 370 words taken on average, i.e. 61% coverage

- 1,000–2,000 words: 492 words, 35% coverage

- 2,000–3,000 words: 532 words, 22% coverage

- 3,000+ words: 544 words, 13% coverage

In characters:

- <5,000 characters: 2,127 characters taken, 66% coverage

- 5,000–10,000 characters: 3,024 characters, 42%

- 10,000–20,000 characters: 3,363 characters, 25%

- 20,000+ characters: 3,574 characters, 12%

It is clear that the grounding volume levels off around 540 words / 3,500 characters, while page lengths continue to rise. This creates diminishing returns: beyond roughly 1,500–2,000 words, each additional paragraph reduces the proportion of your page actually visible to the AI, without increasing the absolute amount of text selected.
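The diminishing returns can be illustrated with a rough sketch built on the word-based buckets above. Coverage is taken here as words selected divided by page length, so dividing the average words selected by the reported coverage gives an implied typical page length per bucket. The second function is a simplifying assumption, not a result from the study: it supposes that a page's selection is capped at the plateau of about 540 words. The names `implied_page_length` and `coverage_at_plateau` are hypothetical.

```python
PLATEAU_WORDS = 540  # approximate plateau reported in the study

# (average words selected, reported coverage) per page-length bucket
BUCKETS = {
    "<1,000 words": (370, 0.61),
    "1,000-2,000 words": (492, 0.35),
    "2,000-3,000 words": (532, 0.22),
    "3,000+ words": (544, 0.13),
}


def implied_page_length(selected_words: float, coverage: float) -> float:
    """Page length implied by coverage = selected_words / page_length."""
    return selected_words / coverage


def coverage_at_plateau(page_words: int, plateau: int = PLATEAU_WORDS) -> float:
    """Coverage if selection were capped at the plateau (an assumption)."""
    return min(page_words, plateau) / page_words


for label, (selected, cov) in BUCKETS.items():
    length = implied_page_length(selected, cov)
    print(f"{label}: ~{length:.0f} words implied page length")

for page_words in (800, 1500, 4000):
    print(f"{page_words} words: {coverage_at_plateau(page_words):.1%} coverage at the plateau")
```

Under that capped-selection assumption, a 4,000-word page ends up with roughly 13.5% coverage, in line with the 13% reported for the 3,000+ bucket.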

Methodological critiques and Dan Petrovic's responses

In a LinkedIn exchange, Rohit Singh raises several important critiques of the methodology, starting with the fact that the dataset has not been shared and that no statistical significance tests were run. He highlights four points: the choice of the 7,060 queries, the method for matching grounding excerpts to source text, the lack of control for confounding variables (authority, freshness, structure), and the absence of confidence intervals for the so-called 2,000-word budget.

Dan responds point by point:

- The queries come from multiple clients in various sectors (health, travel, finance, marketing, sports, B2B, marketplace, gambling, etc.), generated from primary entities expanded into a large number of prompts, each triggering a grounding API call.

- The observed snippets are exactly those provided to the model (an extractive approach, without fuzzy matching), but he acknowledges not having controlled for confounding variables or performed advanced statistical analyses on the budget, noting that ~2,000 words corresponds to a median and that some outliers are probably bugs.

- Finally, Dan explains that he cannot publish the dataset for two reasons: it contains client data, and there is a second reason he cannot disclose without revealing sensitive information about his setup. This means that, even if the results are technically sound and consistent with other AI SEO observations, they cannot be independently verified as things stand.

What this means for content strategy

The main implication is simple to state: in the context of AI Search, information density beats raw length.

Some practical consequences:

- A highly targeted page of 800–1,500 words optimized for a specific intent is better than a 4,000-word block of text of which only 10–15% will be used.

- The goal is not to be "the longest", but to be the most relevant, best-structured source, and compact enough that the essential passages can be easily selected.

- The battle is over ranking: being among the very top results gives you a significant share of the grounding budget, which increases visibility in AI answers.

- For a site aiming at AI Search, this forces you to structure content into dense, well-tagged information blocks with a high signal rate and little filler. The challenge is no longer just to attract the user but also to produce text segments that the engine can easily extract to feed its generative answers.

The article “Grounding Budget: How Google limits content size for AI Search” was published on the site Abondance.