Websites learned to speak to browsers, then to search engines. Cloudflare believes they now need to learn to speak to AI agents, and has launched a tool to help with that. It is an ambitious initiative, but one that raises as many questions as it answers.

Key takeaways:

- Cloudflare launches isitagentready.com, a free tool that assigns an optimization score to websites based on their compatibility with AI agents across four dimensions: discoverability, content, access control, and capabilities.

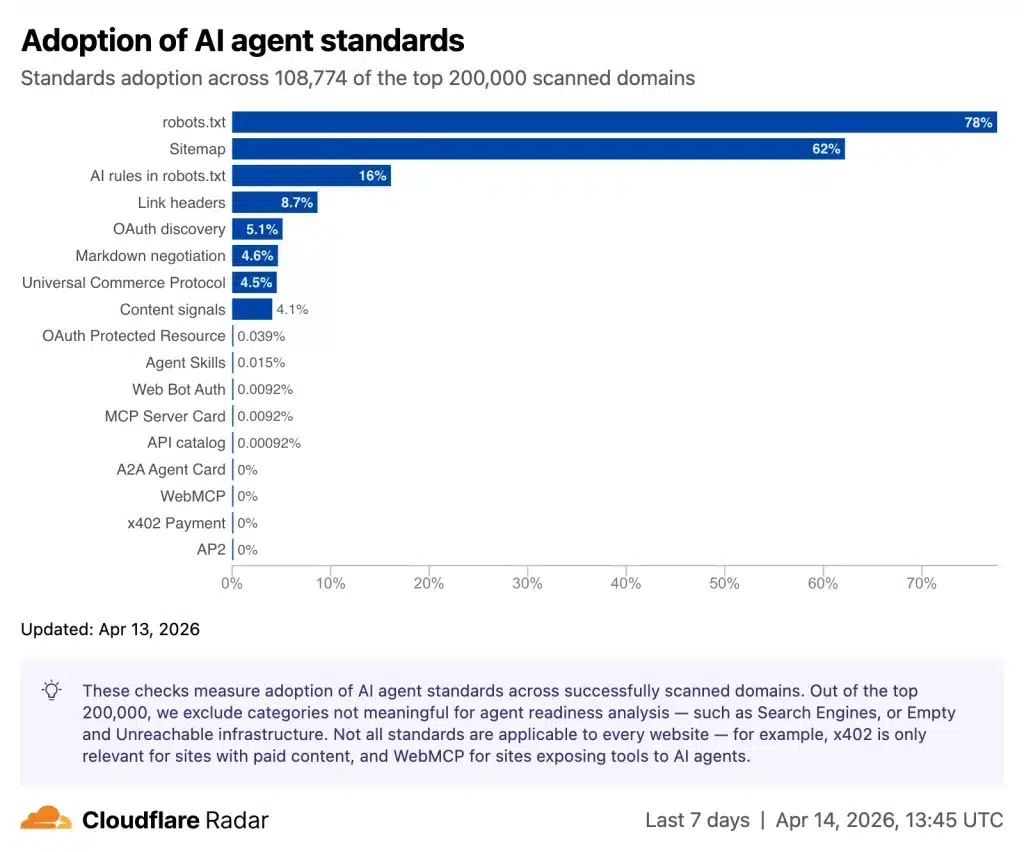

- The web is far from ready: only 4% of the 200,000 sites analyzed declare their usage preferences for AIs, and fewer than 15 sites have adopted the most recent standards like MCP Server Cards or API Catalogs.

- The initiative relies on an ecosystem of standards still under construction, exposing early adopters to risks of fragmentation or rapid obsolescence.

- Cloudflare is both the arbiter of the score and a provider of solutions to improve it, a position that merits questioning.

A scoring tool for a real problem

Cloudflare starts from a solid premise. When an AI agent like Claude, Cursor or OpenCode tries to access a website to read documentation, buy a product or interact with an API, it faces an infrastructure designed for humans: dense HTML, forms, browsing sessions, captchas. The result is slow agents, expensive in tokens, and often inaccurate.

To measure the scale of the problem, Cloudflare scanned the 200,000 most visited domains on the web, filtering out redirectors, advertising servers and tunneling services to focus on sites with which agents could reasonably interact.

The findings are striking: 78% of sites have a robots.txt, but almost all of them were written for traditional search engine crawlers, not for agents. Only 3.9% of sites serve content in Markdown when requested. And emerging standards like MCP Server Cards are present on fewer than 15 sites across the entire dataset.

The tool Cloudflare unveiled, isitagentready.com, then produces a score structured around four axes.

- Discoverability checks for the presence and quality of robots.txt, a sitemap.xml, and Link headers.

- Content assesses whether the site can serve a clean Markdown version at an agent's request.

- Access control (bot access control) checks whether the site expresses clear preferences about what AIs can do with its content.

- Finally, capabilities tests for the presence of more advanced standards like MCP Server Cards, the API Catalog, or OAuth discovery so agents can authenticate properly.

Standards still in their infancy and almost no adoption

This is where Cloudflare's enthusiasm should be tempered. Several of the standards highlighted in this score are either still being drafted at the IETF or are informal proposals with no guarantee of widespread adoption. The API Catalog (RFC 9727), MCP Server Cards, and Web Bot Auth are all recent, and some of them had not yet reached final RFC status at the time of publication.

This situation is not unique to Cloudflare: it is the reality of a web in transition. But it calls for a frankness about the risks that the Cloudflare blog post tends to play down. Adopting a standard today that will be revised or abandoned in eighteen months may mean committing to integration work that will have to be redone. The big players, who have the resources to follow these changes, will benefit. Smaller teams or independent developers, less so.

The case of llms.txt is illustrative. Proposed in September 2024, this standardized file for presenting a site to an LLM is not included by default in Cloudflare's score, only as an option. The reason? The standard is still debated. It is a cautious decision, but it signals that even Cloudflare does not yet know exactly which bets to place.

Negotiating content in Markdown: a real, measurable gain

One of the most concrete aspects of the initiative, and probably the most immediately useful, concerns the ability of a server to respond in Markdown when an agent sends an Accept: text/markdown header. Cloudflare claims to have measured up to an 80% reduction in the number of tokens needed to read a page, compared to its HTML version.

This figure needs to be put into context. The HTML of a technical documentation page is often very verbose: navigation, menus, scripts, nested tags… all of that is pure noise for an LLM. A well-structured Markdown file is the essence of the content without the packaging. The direct consequences are lower API call costs for agents, reduced latency, and a better chance that the agent has the full context without truncation.

To illustrate, Cloudflare says it pointed an agent (Kimi-k2.5 via OpenCode) at its own documentation site (developers.cloudflare.com) and at several other technical sites. Result: 31% fewer tokens consumed and responses 66% faster on its own site than on the other, non-optimized sites. These figures should be taken with caution, as they come from internal, unaudited test conditions. But the order of magnitude is consistent with what we know about the structural overhead of HTML.
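The negotiation described in this section can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Cloudflare's implementation: the function names wants_markdown and negotiate are hypothetical, and the q-value handling is deliberately simplified (Markdown is chosen whenever it is listed with a non-zero weight, without ranking it against text/html).

```python
def wants_markdown(accept_header: str) -> bool:
    """Return True if the Accept header lists text/markdown with a non-zero q-value.

    Simplification (assumption): we do not rank media types against each other.
    """
    for part in accept_header.split(","):
        media_type, _, params = part.strip().partition(";")
        if media_type.strip().lower() != "text/markdown":
            continue
        q = 1.0  # default weight when no q parameter is given
        for param in params.split(";"):
            name, _, value = param.strip().partition("=")
            if name.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0  # treat a malformed weight as "not acceptable"
        return q > 0
    return False


def negotiate(accept_header: str, html: str, markdown: str) -> tuple[dict[str, str], str]:
    """Pick the representation and the response headers that go with it."""
    # Vary: Accept tells caches that the body depends on the Accept header.
    headers = {"Vary": "Accept"}
    if wants_markdown(accept_header):
        headers["Content-Type"] = "text/markdown; charset=utf-8"
        return headers, markdown
    headers["Content-Type"] = "text/html; charset=utf-8"
    return headers, html
```

A browser sending text/html,application/xhtml+xml keeps getting HTML, while an agent sending Accept: text/markdown gets the lighter body from the same URL.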

Technical implementation on Cloudflare Docs: pragmatic and reproducible

The most instructive part of the post may be the one that describes how Cloudflare reworked its own documentation. The approach is interesting because it works around a real problem: as of February 2026, only three of the seven tools tested (Claude Code, OpenCode and Cursor) automatically send the Accept: text/markdown header. For the others, an alternative is needed.

The chosen solution combines two Cloudflare rules:

- A URL rewrite that transforms a request to /r2/get-started/index.md into a request to /r2/get-started/,

- And a header transformation that automatically adds Accept: text/markdown to those rewritten requests.

Result: any agent can access the Markdown version of any page simply by adding /index.md to the URL, without having to manage a special header.
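The logic of those two rules can be modeled as a single function. The sketch below is plain Python, not Cloudflare Rules syntax, and the name rewrite_request is hypothetical; it only shows what happens to the path and the headers of an incoming request.

```python
MARKDOWN_SUFFIX = "/index.md"


def rewrite_request(path: str, headers: dict[str, str]) -> tuple[str, dict[str, str]]:
    """Model the URL rewrite and the header transformation as one step.

    Requests that do not end in /index.md pass through unchanged.
    """
    if not path.endswith(MARKDOWN_SUFFIX):
        return path, dict(headers)

    # Rule 1: strip the trailing "index.md" but keep the final slash.
    new_path = path[: -len("index.md")]

    # Rule 2: replace any existing Accept header (case-insensitively)
    # so the origin sees a Markdown request.
    new_headers = {k: v for k, v in headers.items() if k.lower() != "accept"}
    new_headers["Accept"] = "text/markdown"
    return new_path, new_headers
```

With this model, a request for /r2/get-started/index.md reaches the origin as /r2/get-started/ with Accept: text/markdown, so the origin only needs to implement content negotiation once.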

Another notable decision: rather than a single giant llms.txt file (Cloudflare's documentation contains more than 5,000 pages), each top-level directory has its own file, and the root file points to those subdirectories. This avoids the "grep loop" described in the article: an agent faced with a file too long to fit in its context window starts searching by keywords, loses the overall view, multiplies calls and degrades the quality of its responses.

Granularity was also carefully addressed: about 450 pages that are only lists of links (directory pages) were excluded from the llms.txt files, since they provide no semantic value to an LLM when their child pages are already listed individually.
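As a rough illustration of this layout, the following sketch splits a page inventory into one llms.txt per top-level directory plus a root index, and skips link-list pages. Everything here is an assumption made for the example: the Page type, the is_link_list flag, the build_llms_files name, and the exact Markdown layout of the generated files are not taken from Cloudflare's post.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Page:
    path: str                   # e.g. "/r2/get-started/"
    title: str
    is_link_list: bool = False  # directory page that only lists links


def build_llms_files(pages: list[Page]) -> dict[str, str]:
    """Return a mapping of file path to file content, one file per section."""
    sections: dict[str, list[Page]] = defaultdict(list)
    for page in pages:
        section = page.path.strip("/").split("/")[0]
        # Skip directory pages and pages outside any top-level directory.
        if page.is_link_list or not section:
            continue
        sections[section].append(page)

    files: dict[str, str] = {}
    root_lines = ["# Documentation index", ""]
    for section in sorted(sections):
        # The root file only points at the per-section files.
        root_lines.append(f"- [{section}](/{section}/llms.txt)")
        lines = [f"# {section}", ""]
        lines += [f"- [{p.title}]({p.path})" for p in sections[section]]
        files[f"/{section}/llms.txt"] = "\n".join(lines) + "\n"
    files["/llms.txt"] = "\n".join(root_lines) + "\n"
    return files
```

The point of the split is that an agent can read the short root file first, then fetch only the section it needs, instead of loading every page title into its context at once.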

A player that rates and sells score preparation

Cloudflare's position deserves close scrutiny. The company publishes the reference score for "agent readiness", integrates this score into its URL Scanner, offers ready-made prompts to fix each failure point... and sells the products (Workers, Rules, Access) that allow those fixes to be implemented. The isitagentready.com site itself is served by Cloudflare and exposes an MCP server.

This is not necessarily problematic: Google did the same with Lighthouse and the Core Web Vitals, becoming both judge of performance and provider of tools to improve it (via Google Cloud, Firebase, etc.). But this means the score's criteria can shift according to the company's commercial interests as much as agents' real needs. A standard promoted by a single company, even with good intentions, remains a standard whose direction can be influenced.

It is also notable that Cloudflare is actively pushing agentic payment standards (x402, Universal Commerce Protocol), some of which involve direct partners like Coinbase. These standards are not yet included in the score, but their presence in the tool already signals a direction.

What developers can concretely do today

Despite these caveats, several actions have a clear and immediate return on investment, independent of how standards evolve:

- Serving Markdown on demand is technically simple, reduces costs for API consumers, and improves the quality of agents' responses. It's the priority.

- Maintaining the robots.txt for AI agents (by adding directives for crawlers like GPTBot, ClaudeBot, CCBot, etc.) is good hygiene that costs nothing and clarifies access rights.

- Structuring an llms.txt by section for sites with a lot of content is a good documentation practice that benefits both agents and humans trying to quickly understand a site's architecture.

By contrast, implementing MCP Server Cards or API Catalogs for a site that does not yet have a public API or a clearly defined agent use case would be like building a waiting room before you have any visitors.
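For the robots.txt item in the list above, one way to check what a given policy actually grants each crawler is Python's standard urllib.robotparser module. The policy below is a made-up example, not a recommendation, and check_access is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: the rules are illustrative only.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: ClaudeBot
Allow: /

User-agent: CCBot
Disallow: /

User-agent: *
Allow: /
"""


def check_access(robots_txt: str, agents: list[str], path: str) -> dict[str, bool]:
    """Report, for each user agent, whether the policy allows fetching path."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, path) for agent in agents}


if __name__ == "__main__":
    for agent, allowed in check_access(
        ROBOTS_TXT, ["GPTBot", "ClaudeBot", "CCBot"], "/private/report"
    ).items():
        print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Running a check like this before deploying makes it easier to confirm that a per-agent rule does what was intended, since a crawler with its own User-agent group ignores the catch-all group.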

Adoption as a market indicator, not an obligation

The real value of Cloudflare's initiative may lie in the Radar dataset it introduces: weekly tracking of the adoption of each standard by the 200,000 most visited sites, segmented by domain category. This kind of data will show whether the standards are actually "winning", or whether most sites remain passive while agents adapt to them, as they have already adapted to HTML over the past thirty years.

The answer to this question will reveal a lot about the power dynamics between site publishers and agent developers. If the most popular agents end up integrating HTML parsing capabilities that are robust enough, pressure on sites to adapt will lessen. If instead the costs and delays tied to consuming unoptimized HTML become a measurable competitive advantage for sites that adapt, adoption will naturally follow.

The article “Cloudflare scores your site for the AI agent era with the Agent Readiness Score” was published on the site Abondance.