Sponsored article by Fasterize

While systems are evolving ever faster, SEO teams’ ability to test and deploy optimizations remains structurally slow. An SEO roadmap’s time to market can no longer be confined to 12 months: teams must also be able to act in real time.

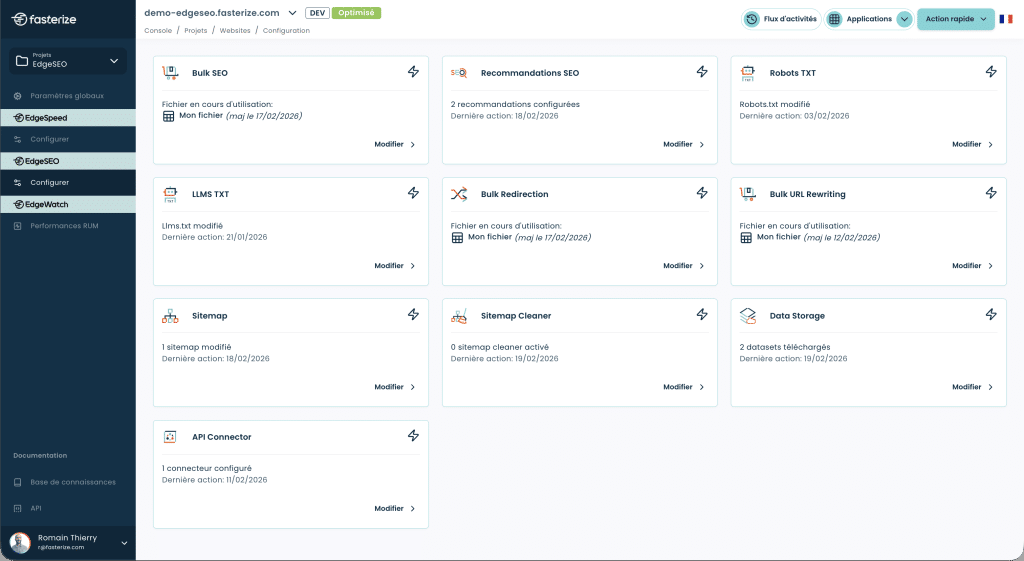

Fasterize gives SEO teams back control by allowing them to implement technical experiments easily and quickly, without constantly tying up development resources. This newfound agility is all the more important in an environment where AI answer engines (ChatGPT, Perplexity, Gemini) are changing the rules of online visibility.

LLMs.txt and best practices

The robots.txt file was long the universal standard for controlling crawler access. Today, a new convention is emerging: the /llms.txt and /llms-full.txt files, designed specifically to guide AI agents. In theory, these files make it possible to define clear rules about which parts of a site are accessible to LLMs, to grant or deny permission to use content, and to communicate information about content usage rights.

Fasterize's EdgeSEO solution can serve these files dynamically based on the detected User-Agent, allowing more granular management of access depending on the bot querying the site. This approach avoids creating multiple static files and makes it possible to adapt rules in real time according to the evolving practices of different LLMs.
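As an illustration of the idea above, here is a minimal Python sketch of choosing an /llms.txt body from the User-Agent. It is not EdgeSEO's rule syntax: the function name `select_llms_txt`, the `VARIANTS` table and the file contents are all hypothetical, and the bot tokens are only examples.

```python
# Hypothetical per-bot variants; tokens and file bodies are illustrative only.
DEFAULT_LLMS_TXT = "# Example Shop\n\n> Catalogue and buying guides.\n"

VARIANTS = {
    "gptbot": DEFAULT_LLMS_TXT + "\n## Docs\n- [Buying guides](/guides.md)\n",
    "perplexitybot": DEFAULT_LLMS_TXT + "\n## Docs\n- [FAQ](/faq.md)\n",
}


def select_llms_txt(user_agent: str) -> str:
    """Return the llms.txt body for the first known bot token in the User-Agent."""
    ua = user_agent.lower()
    for token, body in VARIANTS.items():
        if token in ua:
            return body
    # Unknown clients get the default file.
    return DEFAULT_LLMS_TXT
```

Keeping the variants in one table means a rule change for a given bot is a one-line edit rather than a new static file.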

Note: at this stage, feedback indicates that LLMs still pay little attention to this type of file. Google has specifically stated that it does not rely on the llms.txt file, and other models’ behavior may vary depending on the usage context.

Today, the robots.txt file remains the most widely recognized standard for guiding content crawling. That said, as with any declarative mechanism, it does not constitute an absolute guarantee regarding the potential use of content in third-party datasets (for example via sources like Common Crawl).

Implementing an llms.txt file is still largely an exploratory initiative today and poses no risk to your site. It belongs more to a test-and-learn approach than to a fully controlled strategy.

At this stage, it is mainly about experimenting, observing potential signals sent to the models, and positioning oneself in anticipation of future changes rather than expecting an immediate or guaranteed impact.

Markdown to serve semantic density

AI agents are widely adopting Markdown as the reference format, because it is lighter, more readable and faster for LLMs to process than HTML, which is sometimes overly verbose. Developers use tools like Crawl4AI and Firecrawl to extract web content and feed it to LLMs, and models like ReaderLM-v2 are specifically trained for this transformation.

The principle is therefore to convert HTML to Markdown on the fly for AI agents only. This transformation removes technical “noise” (headers, footers, scripts, navigation elements) to present the LLM with pure, structured content that is cheaper in tokens. On an Amazon product page, switching from raw HTML to targeted Markdown can reduce the token volume from 896,000 to under 8,000, a saving of more than 99%.
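To make the transformation concrete, the following is a deliberately small sketch using only Python's standard `html.parser`. It is an assumption about how such a conversion could work, not the converter EdgeSEO uses: it keeps headings, paragraphs and list items and drops everything inside the noise tags listed in `NOISE_TAGS` (a hypothetical selection).

```python
from html.parser import HTMLParser

# Tags whose whole subtree is treated as noise (illustrative selection).
NOISE_TAGS = {"script", "style", "nav", "header", "footer", "aside"}
HEADING_PREFIX = {"h1": "# ", "h2": "## ", "h3": "### "}
BLOCK_TAGS = {"p", "li", *HEADING_PREFIX}


class MarkdownExtractor(HTMLParser):
    def __init__(self):
        super().__init__()
        self.noise_depth = 0  # > 0 while inside a noise subtree
        self.prefix = None    # Markdown prefix of the open block, None if none is open
        self.parts = []       # text fragments of the open block
        self.lines = []       # finished Markdown blocks

    def handle_starttag(self, tag, attrs):
        if tag in NOISE_TAGS:
            self.noise_depth += 1
        elif tag in BLOCK_TAGS and self.noise_depth == 0:
            self.prefix = HEADING_PREFIX.get(tag, "- " if tag == "li" else "")
            self.parts = []

    def handle_endtag(self, tag):
        if tag in NOISE_TAGS:
            self.noise_depth = max(0, self.noise_depth - 1)
        elif tag in BLOCK_TAGS and self.prefix is not None:
            # Collapse whitespace and emit the block if it has any text.
            text = " ".join("".join(self.parts).split())
            if text:
                self.lines.append(self.prefix + text)
            self.prefix = None

    def handle_data(self, data):
        if self.noise_depth == 0 and self.prefix is not None:
            self.parts.append(data)


def html_to_markdown(html: str) -> str:
    parser = MarkdownExtractor()
    parser.feed(html)
    parser.close()
    return "\n\n".join(parser.lines)
```

A production converter would also need to handle links, tables, images and nested lists; the sketch only shows why the output is so much smaller than the source markup.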

Implementing this via EdgeSEO is simple: a rule can identify the AI bot by its User-Agent and dynamically adapt the response format to Markdown. The main advantage: you don’t need to maintain two separate versions of your pages. You edit your regular HTML page and it is automatically provided in Markdown to AI bots, avoiding any risk of duplicate content or desynchronization.
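The rule described above can be pictured as a small piece of content negotiation. The sketch below is an assumption, not EdgeSEO configuration: `AI_BOT_TOKENS`, `is_ai_bot` and `build_response` are hypothetical names, the token list is not exhaustive, and the converter is passed in as a parameter so the single HTML source stays the only version to maintain.

```python
from typing import Callable

AI_BOT_TOKENS = ("gptbot", "claudebot", "perplexitybot")  # illustrative, not exhaustive


def is_ai_bot(user_agent: str) -> bool:
    ua = user_agent.lower()
    return any(token in ua for token in AI_BOT_TOKENS)


def build_response(
    user_agent: str, html: str, to_markdown: Callable[[str], str]
) -> tuple[dict, str]:
    """Return (headers, body): Markdown for AI bots, the original HTML otherwise."""
    # The body depends on the User-Agent, so shared caches must key on it.
    headers = {"Vary": "User-Agent"}
    if is_ai_bot(user_agent):
        headers["Content-Type"] = "text/markdown; charset=utf-8"
        return headers, to_markdown(html)
    headers["Content-Type"] = "text/html; charset=utf-8"
    return headers, html
```

The `Vary: User-Agent` header is included so that an intermediate cache does not hand the Markdown variant to a human visitor, or the reverse.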

Injecting dynamic FAQs: natural language for RAG

The Question/Answer format has become the foundation of GEO because it aligns perfectly with the RAG (Retrieval-Augmented Generation) architecture used by LLMs. RAG systems retrieve relevant information chunks to feed the model’s context, and the FAQ format naturally structures information optimally for this process.

The method consists of injecting contextualized FAQ blocks via EdgeSEO on strategic pages, notably high-traffic category pages. This dynamic injection enriches content without weighing down the typical user experience, adapting the page depending on whether the visitor is a human or an AI agent.
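A minimal sketch of such an injection, assuming the FAQ entries are already written and the page has a closing `</main>` tag to anchor on. The function names, the `faq` class and the marker are hypothetical and not part of EdgeSEO.

```python
from html import escape


def render_faq_block(faqs: list[tuple[str, str]]) -> str:
    """Render (question, answer) pairs as a plain HTML section."""
    items = "".join(f"<h3>{escape(q)}</h3><p>{escape(a)}</p>" for q, a in faqs)
    return f'<section class="faq"><h2>Frequently asked questions</h2>{items}</section>'


def inject_faq(html: str, faqs: list[tuple[str, str]], marker: str = "</main>") -> str:
    """Insert the FAQ block just before the last occurrence of the marker."""
    if not faqs or marker not in html:
        return html  # nothing to inject, or no safe insertion point
    head, sep, tail = html.rpartition(marker)
    return head + render_faq_block(faqs) + sep + tail
```

Questions and answers are escaped before insertion so that editorial text cannot break the surrounding markup.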

A point of caution to keep in mind: Don't inject for injection's sake. Each FAQ must address a real “zero-click” search intent, meaning it should provide a direct and complete answer to a question users actually ask. The goal is not to artificially inflate content, but to structure existing information to maximize the chances of being cited by LLMs in their generative responses.

Escaping the embedded widget trap: hard-coded review injection

LLMs often ignore the content of iframes and client-side JS widgets because these create a context isolated from the main DOM. When elements critical to E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) such as Trustpilot customer reviews are rendered by client-side JavaScript, they become invisible to the “eyes” of AI agents.

The solution here is to fetch reviews via API and inject them directly into the source code via EdgeSEO before the page is served to the crawler. This server-side injection ensures the content is present in the initial HTML parsed by LLMs, without waiting for complex JavaScript rendering that some AI agents cannot execute.
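The injection step can be sketched as follows, assuming the reviews have already been fetched from the provider's API and arrive as simple dictionaries. The placeholder markup, the field names (`author`, `rating`, `text`) and the function names are hypothetical; no real review API is called here.

```python
from html import escape

PLACEHOLDER = '<div id="reviews-widget"></div>'  # hypothetical widget mount point


def render_reviews(reviews: list[dict]) -> str:
    """Render already-fetched reviews as static HTML inside the widget container."""
    items = "".join(
        "<li><strong>{author}</strong> ({rating}/5): {text}</li>".format(
            author=escape(r["author"]),
            rating=int(r["rating"]),
            text=escape(r["text"]),
        )
        for r in reviews
    )
    return f'<div id="reviews-widget"><ul>{items}</ul></div>'


def inject_reviews(html: str, reviews: list[dict]) -> str:
    """Replace the empty mount point with server-rendered reviews."""
    if not reviews:
        return html  # keep the page untouched if the API returned nothing
    return html.replace(PLACEHOLDER, render_reviews(reviews), 1)
```

Because the container keeps the same `id`, the client-side widget can still take over the element for human visitors.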

The direct impact is a significant strengthening of E-E-A-T in the eyes of LLM recommendation algorithms. Customer reviews represent social proof and user expertise that models highly value in their citation and recommendation processes. By making this content directly accessible within the raw HTML, you mechanically increase your chances of appearing in generative responses.

Webperf & AI Crawl Budget: the impact of latency

The analysis of 2,138 websites cited by AI tools highlights a direct correlation between technical performance and visibility in generative answers. The Core Web Vitals, already important for traditional SEO, become doubly critical for GEO given that they affect the very ability of LLM-bots to extract and cite your content.

The data from the Salt Agency study quantify these correlations:

- Sites with a CLS ≤ 0.1 show a 29.8% higher inclusion rate in generative summaries compared to sites above this threshold.

- Pages with an LCP ≤ 2.5 seconds are 1.47 times more likely to appear in AI outputs than slower pages.

- Crawlers outright abandon 18% of pages whose HTML exceeds 1 MB, underscoring the need for lean markup.

- Finally, a Time to First Byte (TTFB) under 200 ms correlates with a 22% increase in citation density, particularly when paired with robust caching strategies.
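The four thresholds listed above lend themselves to a simple audit. This sketch assumes the metrics have already been measured elsewhere; the `PageMetrics` type and `failed_thresholds` function are hypothetical, and 1 MB is taken here as 1,000,000 bytes, which is an assumption since the study's exact definition is not given.

```python
from dataclasses import dataclass


@dataclass
class PageMetrics:
    cls: float          # Cumulative Layout Shift (unitless)
    lcp_seconds: float  # Largest Contentful Paint
    html_bytes: int     # size of the HTML document
    ttfb_ms: float      # Time to First Byte


def failed_thresholds(m: PageMetrics) -> list[str]:
    """Return the names of the thresholds from the list above that the page misses."""
    checks = {
        "CLS <= 0.1": m.cls <= 0.1,
        "LCP <= 2.5 s": m.lcp_seconds <= 2.5,
        "HTML <= 1 MB": m.html_bytes <= 1_000_000,  # assumption: decimal megabyte
        "TTFB < 200 ms": m.ttfb_ms < 200,
    }
    return [name for name, ok in checks.items() if not ok]
```

Run across a URL list, a check like this gives a quick shortlist of pages to prioritize.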

This point is key: LLM-bots do not 'browse' like humans. They quickly assess the availability, readability and speed of access to the HTML. High latency mechanically reduces the number of pages crawled and therefore the likelihood of being cited.

This is precisely where EdgeSpeed comes in: by drastically reducing TTFB and stabilizing response times, it increases the volume of pages that are actually usable by AI bots.

The overhead added by EdgeSpeed modifications remains marginal (10–15 ms on average) and is largely offset by the lighter HTML and optimized parsing on the LLM side.

Dynamic Rendering vs Cloaking: adapting format without deception

A site can show excellent Core Web Vitals and still remain invisible to LLMs. Why? Because its main content depends on JavaScript that AI bots cannot execute. This technical reality raises a legitimate question: how do you make your content accessible to AI agents without breaking search engine rules?

Good news: Google makes the distinction. The Mountain View giant does not consider dynamic rendering to be cloaking as long as the content served remains similar. The difference lies in intent: it's not about hiding different content, but adapting it to the client's technical capabilities. A 'data-heavy' version for bots maximizes accessible structured information, while a 'UX-heavy' version for humans favors the visual and interactive experience.

EdgeSEO enables precisely this approach by detecting the User-Agent and automatically adapting the content delivery format. Concretely, you can expose a rich text version of information already available (for example by reinjecting into the HTML content initially loaded via embedded widgets or APIs), while preserving a rich experience for human users. This approach is especially relevant given that many LLMs (Claude and some versions of Perplexity) do not render JavaScript and behave more like scrapers that read raw HTML than like full browsers.

The golden rule to stay compliant: the content served to AI agents must be a faithful and complete representation of the information present in the user-facing version, simply optimized in its structure and presentation format. No hidden text, no extra keywords, no different information: only a technical adaptation to compensate for AI crawler limitations.

Caution: since 2022, Google has no longer recommended dynamic rendering as a long-term solution, favoring Server-Side Rendering (SSR) instead. However, in the specific context of GEO, where many LLM-bots still cannot execute JavaScript in 2026, EdgeSEO is a strategic lever to restore the most important content in the source code so that these bots can use it properly.
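The golden rule above can be spot-checked automatically. The sketch below is one possible approach under stated assumptions: the text blocks served to bots and the text visible to a human visitor have both been extracted beforehand, and the function names are hypothetical. It flags any block that exists only in the bot version.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences are ignored."""
    return re.sub(r"\s+", " ", text).strip().lower()


def bot_only_blocks(bot_blocks: list[str], user_visible_text: str) -> list[str]:
    """Return blocks served to bots that do not appear in the user-facing text."""
    haystack = normalize(user_visible_text)
    return [b for b in bot_blocks if normalize(b) and normalize(b) not in haystack]
```

An empty result means nothing extra is shown to bots. Checking completeness in the other direction would require running the same comparison with the roles of the two versions swapped.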

The article "GEO strategy: 5 EdgeSEO optimizations to tame answer engines" was published on the site Abondance.