After the launch of Mistral Vibe 2.0, the French startup Mistral AI unveiled Voxtral Transcribe 2, a family of two audio transcription models designed to meet business needs. The main selling point? Performance comparable to that of industry giants like OpenAI, Google or Amazon, but at one-fifth the price. Available from today, these models are part of Mistral's expansion into the voice AI market, a field until now dominated by American players.

Key takeaways:

- Mistral AI offers two transcription models: Voxtral Mini Transcribe V2 for batch processing and Voxtral Realtime for real-time transcription

- Both models support 13 languages with an error rate of around 4%, and Mistral claims the best price-quality ratio on the market ($0.003/min for Mini and $0.006/min for Realtime)

- Voxtral Realtime provides configurable latency down to under 200 ms and can run locally on a smartphone or computer thanks to its 4 billion parameters

- Performance exceeds GPT-4o mini Transcribe and Gemini 2.5 Flash while being five times cheaper than competing solutions

Voxtral Mini Transcribe V2: power for high-volume workloads

The first model, Voxtral Mini Transcribe V2, is aimed at transcribing large volumes of audio files in batches. It includes advanced features such as speaker segmentation (diarization), contextual biasing, and precise word-level timestamps. It can also process recordings of up to 3 hours in a single request.

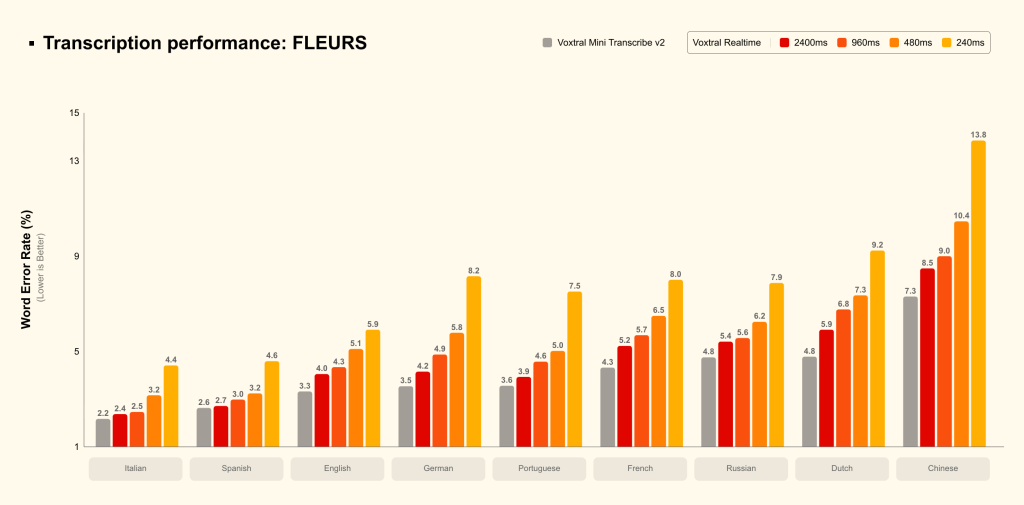

Compatible with 13 languages (English, Chinese, Hindi, Spanish, Arabic, French, Portuguese, Russian, German, Japanese, Korean, Italian and Dutch), this model has an error rate of around 4%. In terms of speed, it processes audio about three times faster than ElevenLabs' Scribe v2 while offering equivalent quality. Mistral claims performance superior to GPT-4o mini Transcribe, Gemini 2.5 Flash, Assembly Universal and Deepgram Nova.

The price of $0.003 per minute makes Voxtral Mini Transcribe V2 the best value-for-money model on the market according to Mistral AI. For companies that need to process large batches of audio files daily (interviews, meetings, podcasts), this solution represents a cost-effective alternative without compromising quality.
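To make the pricing concrete, here is a minimal sketch of the arithmetic for a batch workload. It uses only the two figures quoted above ($0.003 per minute and a 3-hour limit per request). The function name `plan_batch`, the sample workload, and the assumptions that billing is strictly proportional to audio duration and that longer recordings are simply split into several requests are illustrative, not taken from Mistral's documentation.

```python
import math
from decimal import Decimal

# Figures quoted in the article; everything else here is a hypothetical illustration.
PRICE_PER_MINUTE_USD = Decimal("0.003")  # Voxtral Mini Transcribe V2
MAX_MINUTES_PER_REQUEST = 3 * 60         # recordings of up to 3 hours per request


def plan_batch(durations_minutes):
    """Return (number_of_requests, total_cost_usd) for a list of recording lengths.

    Assumes cost is proportional to audio minutes and that a recording longer
    than the per-request limit is split into several requests.
    """
    requests = sum(math.ceil(d / MAX_MINUTES_PER_REQUEST) for d in durations_minutes)
    total_minutes = sum(durations_minutes)
    cost = PRICE_PER_MINUTE_USD * Decimal(str(total_minutes))
    return requests, cost


if __name__ == "__main__":
    # Hypothetical daily workload: a 60-minute interview, a 120-minute meeting
    # and a 220-minute conference recording (400 minutes in total).
    requests, cost = plan_batch([60, 120, 220])
    print(f"{requests} requests, ${cost:.2f}")  # 4 requests, $1.20
```

Under these assumptions, 400 minutes of audio cost about $1.20, and only the 220-minute recording needs to be split across two requests.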

Voxtral Realtime: instant transcription available locally

The second model, Voxtral Realtime, was specifically designed for live transcription. Its main advantage lies in its ultra-low latency, configurable down to under 200 ms, enabling real-time applications such as live captioning or conversational voice agents.

With only 4 billion parameters, Voxtral Realtime is compact enough to run locally on a smartphone or a computer, without a permanent cloud connection. This feature opens interesting possibilities for applications requiring data privacy and security. The model is also available as open weights under the Apache 2.0 license, allowing developers to freely integrate it into their projects.

Mistral's tests show that with a delay of 2.4 seconds (well suited to subtitling), Realtime matches the accuracy of the batch-processing model. Even with the delay reduced to 480 ms, its error rate stays within 1–2% of that baseline, keeping accuracy close to that of deferred processing. This performance surpasses Google's solution, which shows latency of about 2 seconds.

Priced at $0.006 per minute via the API, Voxtral Realtime can also be tested for free in Mistral AI Studio or via the chatbot Le Chat, which lowers the barrier to adoption for developers.

A strategic positioning in voice AI

With this double announcement, Mistral AI shows that it can compete with tech giants in segments previously dominated by Amazon, Google, Microsoft and OpenAI. The French company's approach stands out for its aggressive pricing: comparable performance at one-fifth the cost.

The Parisian startup is multiplying strategic launches. A few days before this announcement, it unveiled Vibe 2.0, its optimized coding agent, confirming its desire to cover the entire value chain of generative AI. Support for 13 languages, including non-European ones like Chinese, Hindi, Arabic, Japanese and Korean, demonstrates global ambition.

To facilitate adoption, Mistral has set up an audio testing area in Mistral AI Studio, allowing users to instantly test transcription capabilities with diarization and timestamps. This accessibility strategy, combined with the open-weights release of Voxtral Realtime, could accelerate the spread of these technologies across the French and European AI ecosystem.

The article "Mistral AI launches two high-performance speech transcription models at a fraction of competitors' costs" was published on the site Abondance.