Ranking in Google's top 10 is no longer a guarantee of appearing in AI-generated answers. An Ahrefs study of 15,000 queries shows that ChatGPT, Gemini, Copilot and Perplexity mainly cite sources that are absent from the pages that rank best on Google or Bing. Result: more than 8 out of 10 citations point to content invisible in traditional SERPs. Why this gap? Because AIs don't “think” in terms of a single ranking; they multiply and merge query variants, upending the rules of SEO.

Key takeaways:

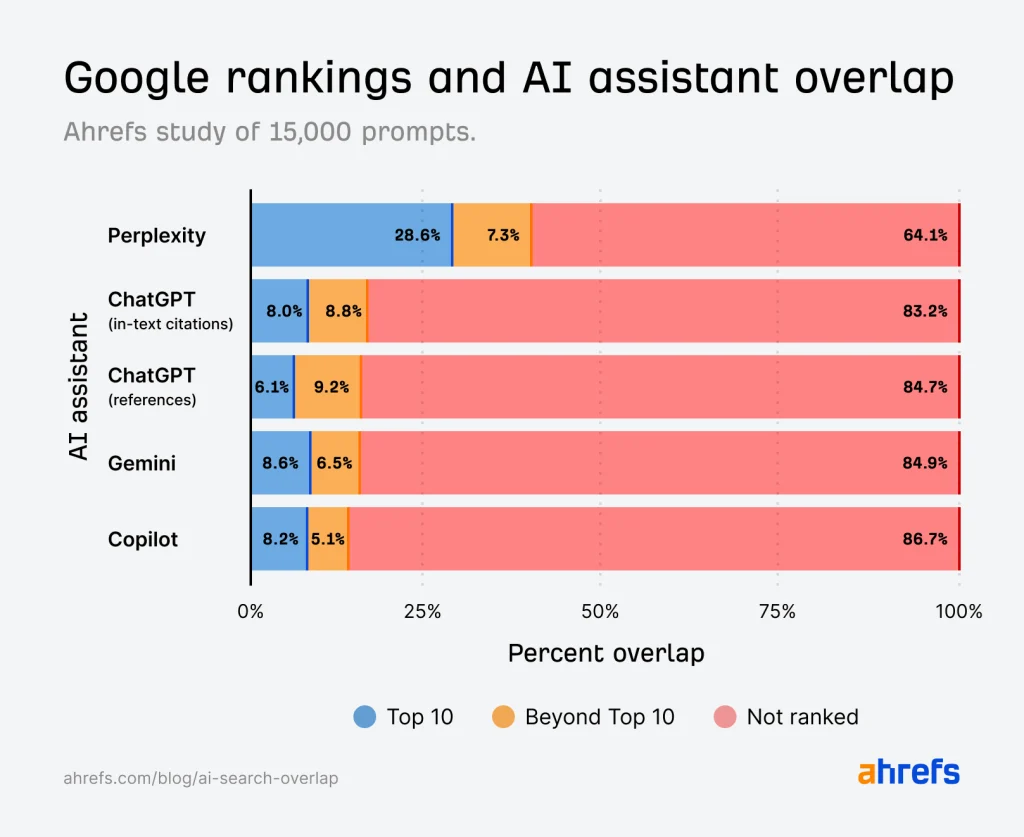

- Only 12% of the links cited by AI assistants appear in Google's top 10 for the original query.

- Perplexity is the AI assistant whose citations align most with Google (about 29% overlap), far ahead of ChatGPT and Gemini (~8%).

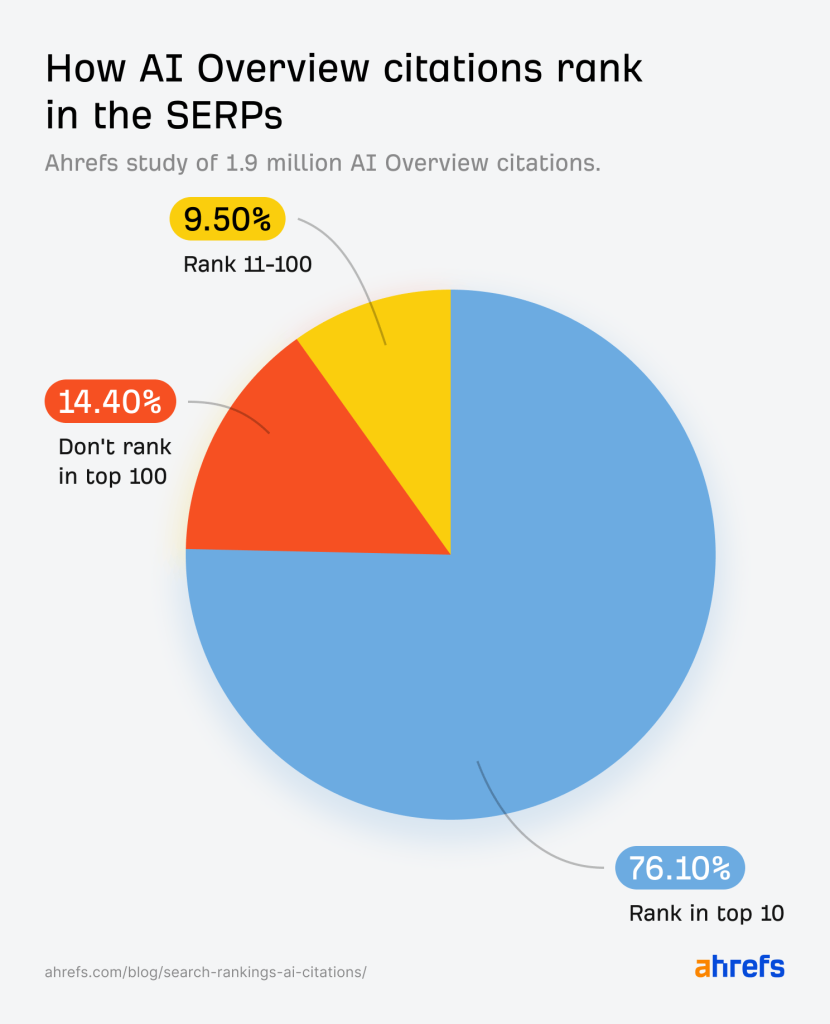

- Conversely, 76% of the links selected in Google AI Overviews come from pages ranked in Google's top 10.

- AIs and search engines do not use the same criteria or logic to select their sources, making optimization much more complex.

The (weak) links between AI citations and Google's top 10

The findings are clear: across 15,000 long-tail queries and the citations returned by four major AI assistants, the study found that only a minority of cited links (12%) appear in Google's top 10 for the same question. What's more, over 80% of the links cited by ChatGPT, Gemini or Copilot don't even appear among the top 100 Google results for those queries!

Perplexity nevertheless stands out from the rest: nearly one in three of its cited URLs points to a page ranked in Google's top 10, compared with fewer than one in 10 for the other assistants. Copilot, tied to Microsoft, logically aligns better with Bing, but even there the overlap remains low (10%).
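To make the overlap figures concrete, here is a minimal Python sketch of how such a share could be computed for one query. It is an illustration, not the study's actual methodology: the `normalize` and `overlap_share` helpers, the URL-matching rules (ignoring scheme, `www.` and trailing slashes) and the sample URLs are all assumptions.

```python
from urllib.parse import urlsplit


def normalize(url):
    """Reduce a URL to host + path so trivial variants compare equal.

    Assumption: scheme, a leading "www." and a trailing slash are ignored.
    """
    parts = urlsplit(url.strip())
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    return host + parts.path.rstrip("/")


def overlap_share(cited_urls, top10_urls):
    """Share of distinct cited URLs that also appear in the top 10."""
    cited = {normalize(u) for u in cited_urls}
    top10 = {normalize(u) for u in top10_urls}
    if not cited:
        return 0.0
    return len(cited & top10) / len(cited)


# Hypothetical data for a single query
cited = [
    "https://www.example.com/guide/",
    "https://forum.example.org/thread/42",
    "https://example.net/post",
    "https://docs.example.io/page",
]
top10 = [
    "https://example.com/guide",
    "https://other.example/x",
]

share = overlap_share(cited, top10)
print(f"{share:.0%} of cited URLs are in the top 10")
```

Averaging this share over many queries would give a figure comparable in spirit to the 12% reported above, although the result depends heavily on how URLs are matched.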

Google AI Overviews: an inverse logic

When it comes to AI Overviews, the logic is reversed: 76% of the cited links come from Google's top 10. These AI summaries therefore follow the approach of classic SERPs, whereas assistants like ChatGPT or Gemini depart from it significantly.

Being in Google's top 10 therefore remains (fortunately) decisive for appearing in Google's rich snippets. But there is no guarantee that this will be enough to appear in answers generated by conversational AIs.

Why such a gap? The query fan-out!

Generative AIs do not try to answer the exact query as posed, the way a traditional search engine would: they generate “variants” of the question (“query fan-out”) and merge the best results using a method similar to Reciprocal Rank Fusion (RRF). As a result, the citations can surface content that is not the leader for the primary keyword but ranks well for other close variants, often long-tail ones.
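The fusion step can be sketched in a few lines of Python. This is a minimal illustration of standard Reciprocal Rank Fusion, in which each document scores the sum of `1 / (k + rank)` over the result lists where it appears; it does not describe what any assistant actually runs. The query variants, the URLs and the constant `k = 60` (a commonly used default) are assumptions.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists into one using RRF.

    Each URL receives 1 / (k + rank) for every list it appears in
    (rank starts at 1); URLs are returned by decreasing total score,
    with ties broken alphabetically.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, url in enumerate(ranking, start=1):
            scores[url] += 1.0 / (k + rank)
    return sorted(scores, key=lambda url: (-scores[url], url))


# Hypothetical fan-out: the original query plus two long-tail variants,
# each with its own (made-up) ranked results.
variant_results = {
    "best running shoes": ["a.example", "b.example", "c.example"],
    "best running shoes for flat feet": ["d.example", "c.example", "a.example"],
    "running shoes for beginners with flat feet": ["d.example", "c.example", "e.example"],
}

fused = reciprocal_rank_fusion(list(variant_results.values()))
print(fused)
```

In this made-up example, `c.example` comes out first after fusion even though it is only third for the original query, because it ranks consistently across the variants, while `b.example`, second for the original query, drops to fourth.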

Added to this is personalization: two users starting from the same query may see the AI draw on different sources depending on their history or conversational context. Optimizing “only for the right keyword” is therefore becoming less and less sufficient.

Perplexity, a special case: systematic citation

Unlike ChatGPT or Gemini, which vary their citation policy depending on the predictability of the question, Perplexity cites sources in almost all of its answers. If it more closely resembles Google results, it's because it was built to search and credit its sources live at each iteration, relying not on the Google index but on its own database, built via the “PerplexityBot” crawler.

Being cited tomorrow: strategies and new habits

To hope to be selected by AIs, and thus gain visibility or recognition, you need to go further than simply ranking for a primary keyword:

- Optimize for clusters of long-tail queries, by analyzing “parent topics” and variants using dedicated tools like Ahrefs or Writesonic.

- Produce in-depth, contextualized content distributed across secondary pages, since 82% of AI Overview citations concern deep pages rather than the site's homepage.

- Build authority on the domains that regularly appear in AI citations (Wikipedia, YouTube and Reddit dominate the top ranks, but specialized or peripheral sites also stand out depending on the assistant).

The article “Only 12% of links cited by the AI are also in Google's top 10” was published on the site Abondance.