ChatGPT, Perplexity and Gemini (Google) have become the new search reflexes for millions of users. And with them, a new discipline was born: GEO – Generative Engine Optimization. But what do brands really know about their visibility on these platforms? And how do LLMs (Large Language Models) choose the content or brands they cite in their answers?

To clarify things, Qwairy.co conducted the largest study ever on the subject: 32,961 queries analyzed, 3 AIs scrutinized, and nearly 60,000 sources collected. Objective: to understand what allows a brand to be cited or referenced in an AI-generated response.

We selected 9 key statistics, both surprising and strategic, to help you adapt your content strategy and position your brand.

To learn more about the context of the study and the retrieval of the responses, you can read Qwairy's article directly.

1. The average response length varies strangely between LLMs

First interesting statistic: comparing the responses between the LLMs.

- SearchGPT: 280 to 340 words per response

- Gemini: 490 to 590 words per response

- Sonar (Perplexity): 340 to 410 words per response

Key takeaways: Gemini gives much longer answers. If you want to appear there, it's better to produce more comprehensive content. On SearchGPT, by contrast, concision is key.

2. Gemini cites more brands on average than its competitors

Among the three LLMs analyzed, Gemini is the one that mentions the most competitors in its answers.

Where SearchGPT remains very selective, Gemini does not hesitate to multiply references to similar brands, products, or services.

Key takeaways: In an LLM environment, to be cited is to exist. Gemini offers more exposure opportunities for brands, even those without national recognition.

Strategic implication:

If you are a challenger brand, Gemini is probably your best entry point into LLMs. The logic is similar to an expanded SERP: where Google shows 10 results, Gemini can present 15 or 20 via its internal citations.

It's also an invitation to:

- work on your presence on the sources Gemini uses most (see Stat #8), and

- structure your content so that it is easily reusable in comparisons, guides, or lists.

3. LLMs love structured lists with bullet points

99% of analyzed responses use lists with dashes "–" to present the elements.

That is a massive share. Almost all responses generated by ChatGPT, Perplexity, or Gemini include at least one structured list, with each item set on its own line as a bullet point.

Key takeaways: LLMs value readable, clear, and visually structured content. They seem to place particular importance on formats that facilitate summarization: lists, steps, comparisons.

If your content consists of long, unstructured paragraphs, you are probably reducing your chances of being picked up by an AI. To adapt to this new standard, it is recommended to:

- Use lists with “–” or “•”

- Structure each idea or recommendation into one clear sentence

- Add frequent subheadings to make content scanning easier

In other words: write as if you were an AI yourself.

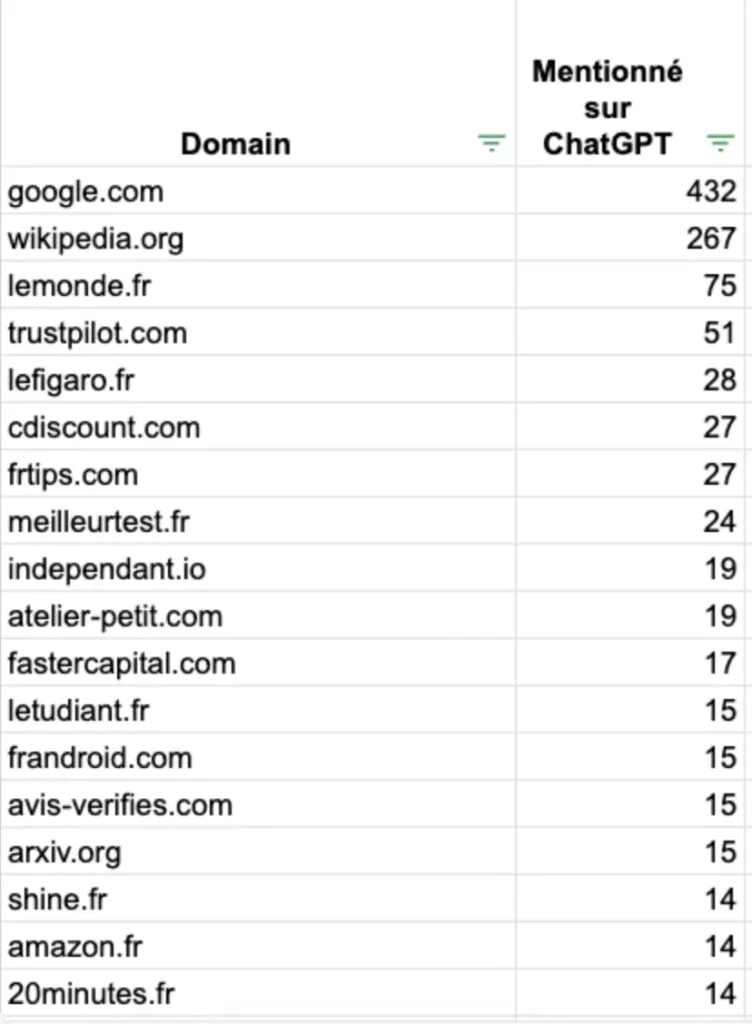

4. Wikipedia is a favored source for LLMs

Unsurprisingly, Wikipedia is the most frequently cited source in the responses generated by LLMs, particularly by SearchGPT and Perplexity. But other players also recur regularly in the analyzed corpora:

- Trustpilot and Avis Vérifiés for consumer reviews

- LeMonde.fr, LeFigaro.fr and LEtudiant.fr for editorial and informational content

- Google Maps in some cases for local queries

Key takeaways: LLMs rely on sources perceived as reliable, editorial, or community-based. These are not always 'big SEO sites', but rather rich, coherent, and well-structured platforms.

If you want to increase your chances of being cited in LLM responses:

- Aim to be present on these platforms (e.g., a mention in a news article, a company profile on Wikipedia, an active Trustpilot page, or a Capterra or G2 page for SaaS…)

This is a new link-building logic, where the mention of the brand matters as much as the link to your site.

Here is the full breakdown of the main sources used in the study:

A small but important clarification here: the data comes from queries added on Qwairy. Some sites may appear more often than others if certain topics are more heavily represented on the tool.

5. Call-to-action verbs appear in more than 25% of LLM responses

LLMs do not only inform, they encourage action. In more than a quarter of the responses analyzed, verbs such as the following appear:

- Discover

- Choose

- Try

- Enjoy

- Go for

Key takeaways: The tone adopted by LLMs is persuasive but subtle.
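To get a feel for this figure on your own data, here is a minimal sketch in Python that estimates the share of responses containing at least one of the verbs listed above. The verb list comes from the article; the function name `cta_share`, the whole-word matching rule, and the sample responses are illustrative assumptions, not the method used in the study.

```python
import re

# Verbs listed in the article. Matching them as whole words,
# case-insensitively, is an assumption made for this sketch.
CTA_VERBS = ("discover", "choose", "try", "enjoy", "go for")

_CTA_PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(v) for v in CTA_VERBS) + r")\b",
    re.IGNORECASE,
)


def cta_share(responses):
    """Return the fraction of responses containing at least one CTA verb."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if _CTA_PATTERN.search(text))
    return hits / len(responses)


# Hypothetical responses, for illustration only.
sample = [
    "Discover the three plans below.",
    "The tool was released in 2021.",
    "You can go for the annual option.",
    "Pricing starts at 10 euros.",
]
print(cta_share(sample))  # 2 of the 4 samples contain a listed verb
```

A check like this only looks at base forms; inflected forms such as "choosing" would need stemming or a longer verb list.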

6. Visual formatting directly influences your presence in LLMs

The study reveals that:

- 99% of answers contain at least one bullet point element

- Most paragraphs are very short: between 1 and 3 sentences

Key takeaways: LLMs do not write like a 2010 blogger. They favor:

- Well-spaced blocks of text

- A logical, easy-to-scan structure

- A direct, flowing tone without frills

Optimizing for GEO also means thinking about editorial design:

- Avoid 10-line 'blocks' of text

- Increase line breaks

- Favor lists, numbered steps, and subheadings

In short, you should write as if your content were going to be copy-pasted into an AI response.

The advantage is that your content will become even more readable for the end user!
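The formatting advice in this section can be turned into a quick self-audit. The sketch below, in Python, checks whether a draft contains at least one bullet line and counts paragraphs longer than three sentences. The function name `audit_formatting`, the bullet and sentence regexes, and the three-sentence threshold (taken from the "1 to 3 sentences" observation) are assumptions chosen for illustration; sentence splitting on punctuation is deliberately naive.

```python
import re

# A line counts as a bullet if it starts with -, –, •, * or "1." / "1)".
BULLET = re.compile(r"^\s*(?:[-–•*]|\d+[.)])\s+")
# Naive sentence boundary: end punctuation followed by whitespace or end of text.
SENTENCE_END = re.compile(r"[.!?]+(?:\s+|$)")


def audit_formatting(text, max_sentences=3):
    """Report list usage and the number of overly long paragraphs in a draft."""
    blocks = [b.strip() for b in re.split(r"\n\s*\n", text) if b.strip()]
    bullet_lines = 0
    long_paragraphs = 0
    for block in blocks:
        lines = block.splitlines()
        bullets = [ln for ln in lines if BULLET.match(ln)]
        bullet_lines += len(bullets)
        if len(bullets) == len(lines):
            continue  # pure list block, nothing to count as prose
        prose = " ".join(ln for ln in lines if not BULLET.match(ln))
        if len(SENTENCE_END.findall(prose)) > max_sentences:
            long_paragraphs += 1
    return {"has_list": bullet_lines > 0, "long_paragraphs": long_paragraphs}


# Hypothetical draft, for illustration only.
draft = """Short intro. It has two sentences.

- First point
- Second point

One. Two. Three. Four. Five."""

report = audit_formatting(draft)
print(report)
```

Abbreviations and decimal numbers will inflate the sentence count, so treat the result as a rough signal rather than a precise measurement.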

7. 59,992 sources were cited… but very few are used regularly

The Qwairy study identified 59,992 unique sources used in responses generated by LLMs.

But surprisingly: very few of these sources recur frequently.

Key takeaways: LLMs draw on an extremely large corpus, but they rarely rely on the same sites from one response to another (except for a few like Wikipedia or Trustpilot).

Strategic implication:

This opens two major opportunities:

- You have a real chance of being cited even if you're not an ultra-authoritative site.

- At the same time, focusing on the 1% of sources that appear repeatedly (Wikipedia, national media, comparison sites) remains a powerful GEO tactic to increase your chances of appearing.

It's a twofold logic: aim for the top (highly cited sources), but also for the niches (because the field isn't saturated).
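If you collect the URLs cited in AI answers for your own queries, a similar concentration check is easy to run. The following Python sketch groups cited URLs by domain and computes the share of domains cited more than once. Grouping by domain, the helper names `domain_counts` and `recurring_share`, and the sample URLs are assumptions for illustration; the study does not say exactly how it defined a "unique source".

```python
from collections import Counter
from urllib.parse import urlparse


def domain_counts(urls):
    """Count citations per domain, ignoring a leading 'www.'."""
    counts = Counter()
    for url in urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            counts[host] += 1
    return counts


def recurring_share(counts, min_citations=2):
    """Return the fraction of domains cited at least `min_citations` times."""
    if not counts:
        return 0.0
    recurring = sum(1 for n in counts.values() if n >= min_citations)
    return recurring / len(counts)


# Hypothetical citations, for illustration only.
cited = [
    "https://en.wikipedia.org/wiki/Search_engine",
    "https://www.trustpilot.com/review/example.com",
    "https://en.wikipedia.org/wiki/Chatbot",
    "https://blog.example.org/best-crm-2025",
]

counts = domain_counts(cited)
print(counts.most_common(3))
print(recurring_share(counts))
```

A low recurring share on your own queries would point toward the niche opportunity described above, while the handful of recurring domains shows where a presence matters most.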

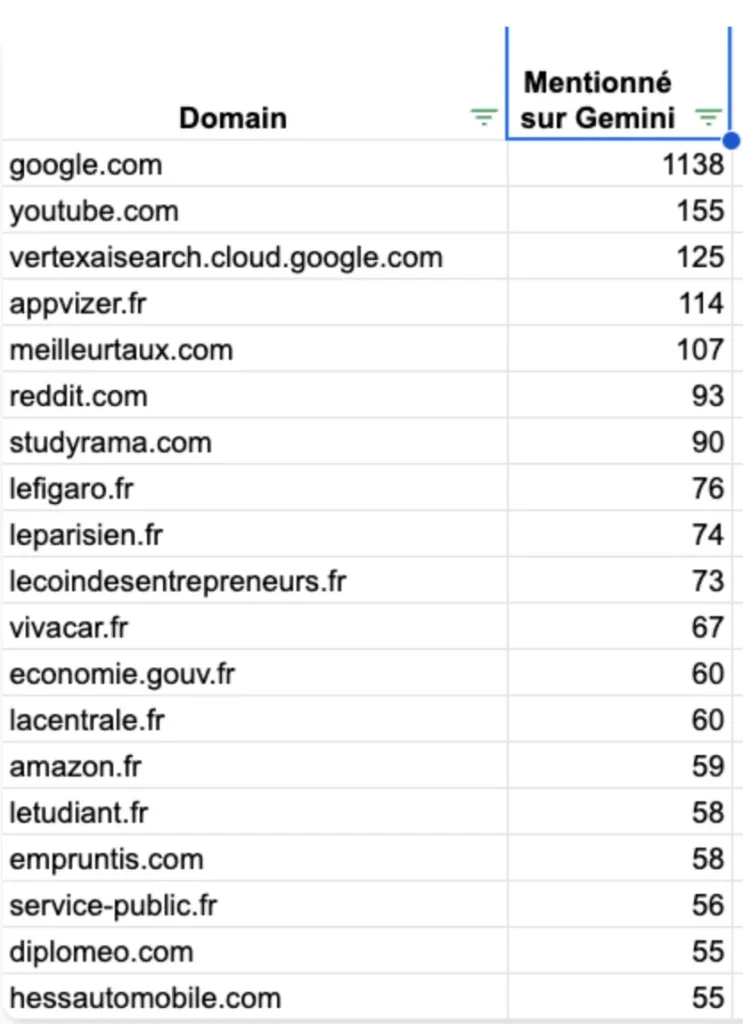

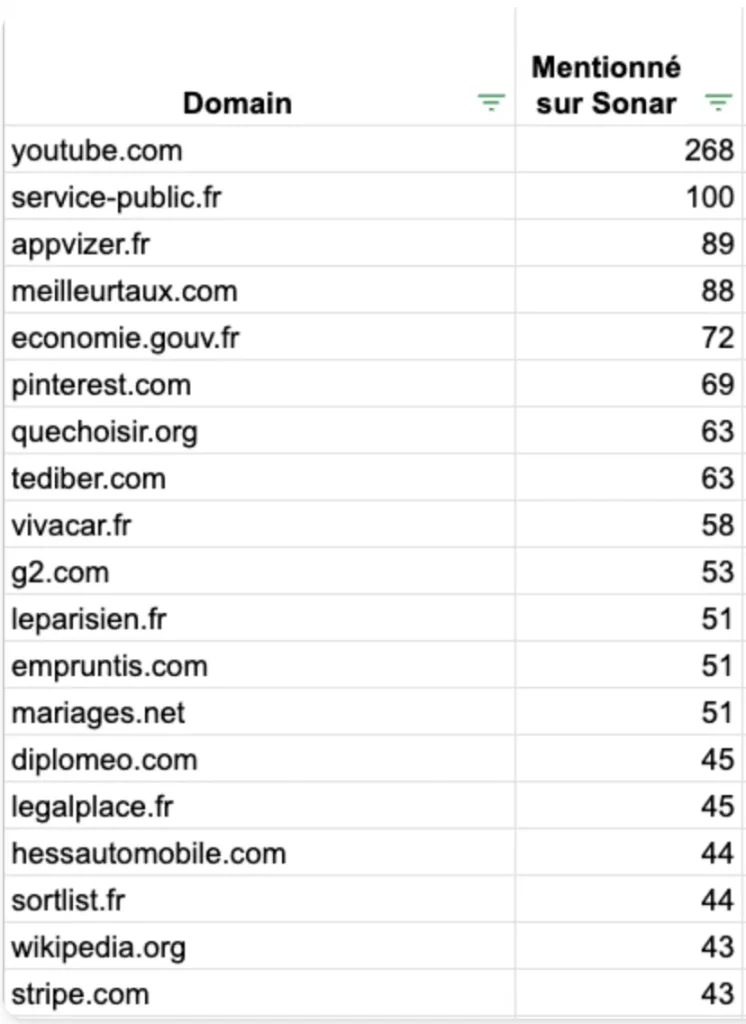

8. LLMs do not all rely on the same sources

Each LLM has its own sourcing instincts. The study reveals marked differences:

SearchGPT (ChatGPT)

- Wikipedia

- Trustpilot

- LeMonde.fr

- Google Maps

Gemini (Google)

- Google.com

- YouTube

- Public websites (e.g., service-public.fr, gouv.fr)

- Reddit, Studyrama, Lecoindesentrepreneurs

Perplexity (Sonar)

- YouTube is the most cited source

- Very large presence of blogs, forums, specialized comparison sites

Key takeaways: There is no 'universal reference' for LLM sources. Each model has its own biases and ecosystem.

- If your audience mainly uses ChatGPT: work on your presence on Wikipedia, French media, review sites.

- If you target Perplexity: think about YouTube videos, technical content, and Q&A formats.

- For Gemini: be present on high-authority platforms and public websites.

To succeed with your GEO, you need to adapt your content and link-building strategy to each AI engine, as you would for Google vs Bing in traditional SEO.

9. URL slugs contain very specific (and revealing) keywords

The study highlighted a clear trend in the URLs used as sources by LLMs: slugs (the human-readable part of the URL) frequently contain certain keywords.

Here are some patterns observed:

- SearchGPT: "best", "top", "2023"

- Gemini: "guide", "how", "choose"

- Perplexity: "2025", "comparison", "price", "reviews"

Obviously, adding "2023" to a slug won't make you rank on ChatGPT. But it shows that ChatGPT can still rely on older URLs.

Similarly, the ubiquity of terms like "best" or "guide" also shows that these types of content should be considered in your content strategy to succeed in SEO in 2025.
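To look for the same kind of slug patterns in the URLs cited for your own queries, here is a minimal Python sketch. It takes the last path segment of each URL as the slug, splits it into lowercase tokens, and counts in how many URLs each token appears. The function names `slug_tokens` and `top_slug_keywords`, the tokenization rule, and the sample URLs are illustrative assumptions, not a description of how Qwairy processed its data.

```python
import re
from collections import Counter
from urllib.parse import urlparse


def slug_tokens(url):
    """Split the last path segment of a URL into lowercase keyword tokens."""
    path = urlparse(url).path.strip("/")
    if not path:
        return []
    slug = path.split("/")[-1].lower()
    slug = re.sub(r"\.[a-z0-9]+$", "", slug)  # drop a file extension such as .html
    return [t for t in re.split(r"[^a-z0-9]+", slug) if t]


def top_slug_keywords(urls, n=5):
    """Return the n tokens that appear in the most slugs (one count per URL)."""
    counts = Counter()
    for url in urls:
        counts.update(set(slug_tokens(url)))
    return counts.most_common(n)


# Hypothetical cited URLs, for illustration only.
urls = [
    "https://example.com/blog/best-crm-tools-2025.html",
    "https://example.org/guide/how-to-choose-a-crm",
    "https://example.net/best-crm-comparison/",
]

print(slug_tokens(urls[0]))
print(top_slug_keywords(urls, 2))
```

Running this separately on the sources cited by each engine would show whether your own niche follows the per-engine patterns listed above.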

The article “9 surprising statistics from 32,961 searches conducted on ChatGPT, Perplexity and Gemini” was published on the Abondance website.
