In a single day, the Paris-based startup Mistral AI made three major announcements that confirm its ambition to become an essential building block of global AI infrastructure. Here is what they change in concrete terms.

Key takeaways:

- Mistral Small 4 unifies reasoning, multimodality and agentic coding in a single model for the first time, under an Apache 2.0 license.

- Mistral joins NVIDIA's Nemotron coalition as a founding member, alongside Perplexity, Cursor and Black Forest Labs.

- Leanstral is presented as the first open-source agent capable of generating formal proofs of code in Lean 4, with Mistral claiming a cost/performance ratio up to 15 times better than generalist competitors.

- These three announcements outline a strategic repositioning: Mistral no longer presents itself as a national alternative but as a leading global player.

Small 4: a model that replaces three deployments

Until now, technical teams wanting to use Mistral models had to choose between several specialized models: Magistral for reasoning, Pixtral for image processing, Devstral for agentic coding. Mistral Small 4 ends that fragmentation.

The model is based on a Mixture of Experts (MoE) architecture: 119 billion parameters in total, but only 6 billion active per request. This design preserves a large overall capacity while limiting the computational cost of each inference. Compared to Mistral Small 3, the startup reports a 40% reduction in latency and three times more requests per second in a configuration optimized for throughput.
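To make "only a fraction of the parameters is active" concrete, here is a toy top-k router in plain Python. It is a minimal sketch of the generic Mixture-of-Experts idea, not Mistral's implementation: the number of experts, the top_k value, the router weights and the names moe_forward and make_expert are all hypothetical. In typical MoE models the routing decision is made per token: a router scores every expert, but only the few highest-scoring experts are executed, so compute scales with the active parameters rather than the total.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(values: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a short list of scores."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    x: Vector, experts: List[Expert], router: List[Vector], top_k: int = 2
) -> Tuple[Vector, List[int]]:
    """Route one token vector to its top_k experts and mix their outputs."""
    # The router scores every expert with a cheap dot product...
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in router]
    # ...but only the top_k highest-scoring experts are selected.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:top_k]
    gates = softmax([scores[i] for i in chosen])
    out = [0.0] * len(x)
    for gate, i in zip(gates, chosen):
        y = experts[i](x)  # only the selected experts actually run
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, chosen


# Usage with 8 hypothetical experts that simply scale their input.
calls: List[int] = []


def make_expert(i: int) -> Expert:
    def expert(x: Vector) -> Vector:
        calls.append(i)  # record which experts were executed
        return [(i + 1) * v for v in x]
    return expert


experts = [make_expert(i) for i in range(8)]
router = [[float(i), -float(i)] for i in range(8)]  # hypothetical router weights
out, chosen = moe_forward([1.0, 0.5], experts, router, top_k=2)
print(chosen, calls)

# Scale reported for Small 4: 6B active parameters out of 119B in total.
active_fraction = 6 / 119
print(f"{active_fraction:.1%} of parameters are active at a time")
```

Eight experts are defined but only two run for this input; with the reported figures, roughly 5% of Small 4's parameters do the work on any given step.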

The context window reaches 256,000 tokens, which allows processing long documents without having to split them. The model accepts both text and images as input.

The most notable addition for professional use is the reasoning_effort parameter. It lets the user choose between a fast, lightweight response equivalent to Small 3’s behavior and an in-depth, step-by-step analysis comparable to the former Magistral models. A single deployment therefore covers needs that previously required three.
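The reasoning_effort switch can be pictured as one extra field on an otherwise identical request. The sketch below builds two chat-completion payloads for the same model and shows how they could be sent with Python's standard library. It rests on assumptions: the model alias mistral-small-latest, the reasoning_effort values "none" and "high", and the helper names build_request and send are placeholders, so check Mistral's API reference for the exact identifiers before relying on them.

```python
import json
import urllib.request

API_URL = "https://api.mistral.ai/v1/chat/completions"
MODEL = "mistral-small-latest"  # assumption: an alias resolving to Small 4


def build_request(prompt: str, deep: bool) -> dict:
    """Build a chat payload; only reasoning_effort differs between modes."""
    return {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        # Hypothetical values: check the API reference for the accepted ones.
        "reasoning_effort": "high" if deep else "none",
    }


def send(payload: dict, api_key: str) -> dict:
    """POST the payload and return the decoded JSON response."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


quick = build_request("Summarize this clause in one sentence.", deep=False)
careful = build_request(
    "Does this clause contradict section 4? Explain step by step.", deep=True
)
print(json.dumps(careful, indent=2))
```

Both payloads target the same model; only the effort level changes, which is what allows one deployment to replace separate "fast" and "reasoning" models.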

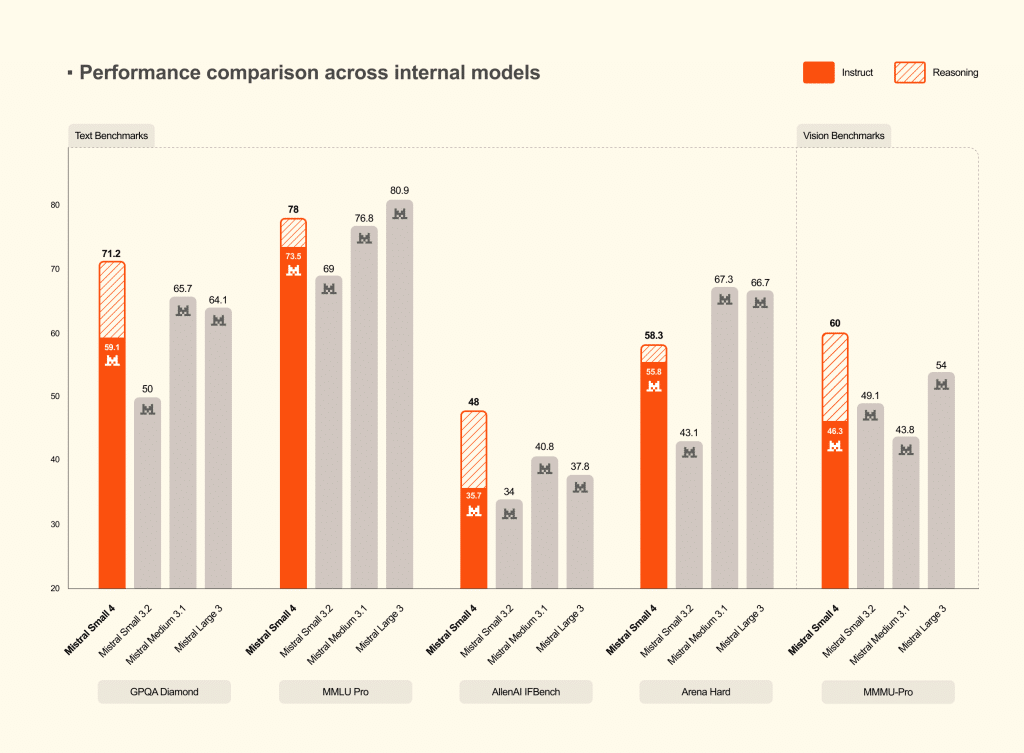

On benchmarks, Mistral claims that Small 4 with reasoning enabled equals or exceeds GPT-OSS 120B on three evaluated tests, while producing significantly shorter outputs. Shorter outputs mean less latency, lower inference costs, and a better user experience in production.
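The link between output length, latency and cost is simple arithmetic: for a given model, the output-token bill and the decoding time both grow roughly linearly with the number of tokens generated. In the sketch below, the token counts, the price per million output tokens and the decoding speed are hypothetical placeholders, not Mistral or OpenAI figures.

```python
from typing import Tuple


def response_cost_and_time(
    output_tokens: int, usd_per_million: float, tokens_per_second: float
) -> Tuple[float, float]:
    """Estimate output cost (USD) and decoding time (seconds) for one response.

    Simplification: ignores input tokens, prefill time and network latency.
    """
    cost = output_tokens * usd_per_million / 1_000_000
    seconds = output_tokens / tokens_per_second
    return cost, seconds


# Hypothetical values: same price and speed, different answer lengths.
PRICE = 0.60   # USD per million output tokens (placeholder)
SPEED = 100.0  # output tokens per second (placeholder)

verbose = response_cost_and_time(4_000, PRICE, SPEED)
concise = response_cost_and_time(1_500, PRICE, SPEED)
print(f"verbose: ${verbose[0]:.4f}, {verbose[1]:.0f} s")
print(f"concise: ${concise[0]:.4f}, {concise[1]:.0f} s")
```

At equal quality, a model that answers in fewer tokens is cheaper and faster in exact proportion, and the savings multiply across every request in production.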

The model is available via the Mistral API, on Hugging Face, and as an NVIDIA NIM optimized container for on-premise deployments. It is released under the Apache 2.0 license and is compatible with common inference frameworks: vLLM, llama.cpp, SGLang and Transformers.

The Nemotron coalition: Mistral joins the big leagues

It's probably the most strategic announcement of the day. Mistral joins NVIDIA's Nemotron coalition as a founding member, alongside Cursor, Perplexity, Black Forest Labs and Mira Murati's lab.

The coalition's goal is to co-develop a base model, Nemotron 4, trained on NVIDIA's DGX Cloud and then released as open source so that the whole industry can specialize it. Each member brings complementary expertise: multilingual capabilities and model architecture for Mistral, research for Perplexity, orchestration for LangChain, multimodal expertise for Black Forest Labs.

For Mistral, this means accessing NVIDIA's computing infrastructure to train models at a scale it could not finance on its own. In return, the startup brings its training techniques, its multimodal capabilities and its enterprise-oriented fine-tuning tools.

The industrial logic is clear. Faced with the closed ecosystems of OpenAI and Google, this coalition organizes the open-source camp into a coordinated force. For NVIDIA, a reference model optimized for its chips directly drives demand for its hardware.

This alliance is not without tension. The DGX Cloud on which Nemotron 4 will be trained belongs to NVIDIA. Mistral is aware of this: the company is investing in its own computing capacity in France and in Sweden at the same time, so that the partnership remains a strategic choice rather than a forced dependency. The question of technological sovereignty remains open: Mistral is tightening its ties with an American company subject to the extraterritorial reach of US regulations.

Leanstral: when AI proves it is right

The third announcement is the most technical. It addresses a limited audience today, but it touches on a fundamental problem of agentic AI.

The problem Leanstral seeks to solve

When an AI agent generates code today, the result is probabilistic. The code looks correct and passes its tests, but nothing guarantees that it is correct. Verification falls to humans, who proofread, test and fix it. The faster agents produce code, the more critical this human bottleneck becomes.

There is a solution to this problem: formal proof. A proof assistant like Lean 4 lets a developer write not only a program but also a mathematical proof of its properties. If the proof is valid, the code is guaranteed to satisfy the specification it was proven against: not "probably correct," not "correct for the tested cases," but correct in the strict mathematical sense. Lean 4 is already used by mathematicians to formalize complex proofs and by engineers to certify critical software.
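To illustrate the difference between a test and a proof, here is a toy Lean 4 example using core Lean only (no Mathlib). The function myMax and the theorem names are invented for illustration. The `example` line checks a single input, the way a unit test would; the two theorems cover every pair of natural numbers, and Lean refuses the file if either proof is wrong.

```lean
-- A toy program: the larger of two natural numbers.
def myMax (a b : Nat) : Nat :=
  if a ≤ b then b else a

-- A "test": checks one input only, by evaluation.
example : myMax 3 5 = 5 := by decide

-- A specification proven for all inputs: the result bounds both arguments.
theorem le_myMax_left (a b : Nat) : a ≤ myMax a b := by
  unfold myMax
  split <;> omega

theorem le_myMax_right (a b : Nat) : b ≤ myMax a b := by
  unfold myMax
  split <;> omega
```

`split` produces one goal per branch of the `if`, and `omega` closes each branch with linear arithmetic over the hypotheses it introduces.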

The problem is that writing proofs in Lean 4 is slow, demanding specialist work. That is precisely the gap Leanstral aims to fill.

What Leanstral actually does

Leanstral is an AI agent with 6 billion active parameters, trained specifically to generate formal proofs in Lean 4. It does not just produce code: it generates the proof that certifies that code. Lean 4 then checks the proof and rejects it if it is invalid. The verifier is incorruptible.
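The generate-then-check loop described above can be sketched in a few lines of Python. This is an illustration under assumptions, not Leanstral's actual harness: generate stands in for any proof-producing model, lean_accepts assumes a `lean` executable on the PATH and a standalone file with no Mathlib imports, and all names are hypothetical. One practical detail: Lean accepts a proof left as `sorry` with only a warning, so a checker has to reject it explicitly. Counting a problem as solved when any of k attempts passes the checker is also how the pass@k metric is commonly defined.

```python
import subprocess
import tempfile
from pathlib import Path
from typing import Callable, Optional

# Placeholders Lean accepts with only a warning; reject them outright.
# Crude textual check: it also rejects files that merely mention these words.
FORBIDDEN = ("sorry", "admit")


def lean_accepts(source: str) -> bool:
    """Return True if `lean` checks the file without errors.

    Assumes a `lean` binary on PATH and a self-contained file (core Lean only).
    """
    if any(word in source for word in FORBIDDEN):
        return False
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "Candidate.lean"
        path.write_text(source, encoding="utf-8")
        result = subprocess.run(["lean", str(path)], capture_output=True, text=True)
    return result.returncode == 0


def prove(
    generate: Callable[[int], str],
    verify: Callable[[str], bool],
    attempts: int,
) -> Optional[str]:
    """Ask for up to `attempts` candidates; return the first one that verifies."""
    for attempt in range(attempts):
        candidate = generate(attempt)
        if verify(candidate):
            return candidate  # accepted by the checker, not by human review
    return None  # no candidate survived verification


# Usage with a fake generator and a fake checker (no Lean install needed).
candidates = [
    "theorem t : 2 + 2 = 4 := by sorry",
    "theorem t : 2 + 2 = 5 := rfl",
    "theorem t : 2 + 2 = 4 := rfl",
]


def fake_check(src: str) -> bool:
    return "= 4 := rfl" in src


print(prove(lambda i: candidates[i], fake_check, attempts=3))
```

In a real setup, passing lean_accepts as verify makes the proof assistant, not a human reviewer, the final gate.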

According to Mistral, this is the first open-source agent of its kind for Lean 4. Existing systems are either wrappers around generalist models or limited to isolated mathematical problems. Leanstral is designed to work in real formal repositories, with a sparse architecture and optimizations specific to proof tasks.

Benchmarks and their limits

On FLTEval, Mistral's own evaluation suite, Leanstral achieves a score of 26.3 for $36 (pass@2), compared with 23.7 for $549 for Claude Sonnet 4.6. At pass@16, Leanstral reaches 31.9, beating Sonnet by 8 points. Claude Opus 4.6 remains the best in absolute quality with a score of 39.6, but at a cost of $1,650, roughly 46 times the $36 of Leanstral's pass@2 run.
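The cost comparison can be recomputed from the published FLTEval figures. The sketch below derives points per $100 spent and each model's cost relative to Leanstral's pass@2 run; it uses only the numbers quoted above and inherits the limits of an in-house benchmark.

```python
# FLTEval figures as reported: (score, cost in USD).
FIGURES = {
    "Leanstral (pass@2)": (26.3, 36.0),
    "Claude Sonnet 4.6": (23.7, 549.0),
    "Claude Opus 4.6": (39.6, 1650.0),
}
BASELINE_COST = FIGURES["Leanstral (pass@2)"][1]

# Score obtained per $100 spent, and cost relative to Leanstral's pass@2 run.
points_per_100_usd = {
    name: score / cost * 100 for name, (score, cost) in FIGURES.items()
}
cost_ratio = {name: cost / BASELINE_COST for name, (_, cost) in FIGURES.items()}

for name in FIGURES:
    print(
        f"{name:20} {points_per_100_usd[name]:6.2f} points per $100, "
        f"cost x{cost_ratio[name]:.1f}"
    )
```

The Sonnet cost ratio comes out at about 15, in line with the "up to 15 times" figure Mistral highlights; the Opus ratio computed from these two numbers is about 46.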

These figures call for cautious interpretation. FLTEval is an in-house benchmark that has not been reproduced by third parties. Comparing the cost of a specialized model with 6 billion active parameters against massive generalist models is not neutral: it pits a tool optimized for one task against Swiss Army knives. Leanstral's ability to generalize beyond the FLT project (the Lean formalization of Fermat's Last Theorem) on which it was evaluated remains to be demonstrated. Finally, all tests were run in the Mistral Vibe environment.

Why it matters beyond mathematics

The real issue is trust in autonomous agents. Today, an AI agent writes code and acts on data without every line being verified. An agent able to formally prove that its code does exactly what it was asked to do changes the game: the human specifies the expected outcome, and the machine proves that it delivered it. Supervision shifts from line-by-line verification to writing specifications.
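This division of labor can be made concrete with another toy Lean 4 example, again core Lean only; clamp and the theorem names are hypothetical. The human writes the theorem statements, which form the specification, including the precondition lo ≤ hi. The implementation and the proof scripts are what an agent would be asked to produce, and Lean checks that the two match.

```lean
-- Implementation: the part an agent could write.
def clamp (lo hi x : Nat) : Nat :=
  if x < lo then lo else if hi < x then hi else x

-- Specification: the part a human writes.
-- Whenever lo ≤ hi, the output stays within [lo, hi].
theorem lo_le_clamp (lo hi x : Nat) (h : lo ≤ hi) : lo ≤ clamp lo hi x := by
  unfold clamp
  split <;> (try split) <;> omega

theorem clamp_le_hi (lo hi x : Nat) (h : lo ≤ hi) : clamp lo hi x ≤ hi := by
  unfold clamp
  split <;> (try split) <;> omega
```

Reviewing two short theorem statements is far less work than auditing every branch of the implementation, which is the shift in supervision described above.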

Leanstral is available now in Mistral Vibe (command /leanstall), through a free API (labs-leanstral-2603), and as a direct download of its weights under the Apache 2.0 license.

A deliberate strategic repositioning

Read together, these three announcements are not isolated product releases. They describe a trajectory.

- Small 4 covers the enterprise market with a versatile, high-performing and cost-effective model.

- The Nemotron coalition gives Mistral a presence in shaping global standards for open-source AI.

- Leanstral demonstrates R&D depth that goes beyond chat models.

Mistral is targeting one billion euros in revenue this year, is building a data center in Sweden, signed an agreement with the French Ministry of the Armed Forces in January and acquired Koyeb in February. It has done all this while maintaining a consistent open-source policy under the Apache 2.0 license. The remaining question is sustainability: how long can a hypergrowth company keep publishing its models for free while financing the colossal infrastructure required to train them?

The article "Mistral hits hard: new model, alliance with NVIDIA and a formal-proof agent" was published on the Abondance website.