You just published a new article on your blog or a new page on your website, and you have made all the necessary optimizations to improve your organic search ranking.

But here's the thing: all those efforts will be invisible if Google doesn't index your content in its database!

How can you know whether your new site or content will take an hour or a week to appear in search results? How can you reduce the time between publishing and the indexing of your new URL?

We know that Google can take anywhere from a few days to a few weeks to index new content.

Fortunately, there are simple steps you can take for more efficient indexing. We'll look at how to speed up the process below.

1. Request indexing from Google

The easiest way to get your site indexed is to request it via Google Search Console.

To do this, go to the URL Inspection tool and paste the URL you want indexed into the search bar. Wait for Google to check the URL: if it isn't indexed, click the "Request indexing" button.

As mentioned in the introduction, a new site won't be indexed overnight. Moreover, if your site is not properly configured to let Googlebot crawl it, it may not be indexed at all. Let's continue!

2. Optimize the robots.txt file

The robots.txt file tells Googlebot which parts of your site it should or should not crawl. Bing and Yahoo also recognize robots.txt files.

You can use robots.txt to keep crawlers away from low-value pages, so they focus on your most important pages and don't overload your server with requests.
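To sanity-check your rules before publishing them, you can run them through Python's standard-library robots.txt parser. This is a minimal sketch: the robots.txt content and the example.com URLs are hypothetical, and Python's parser applies rules in file order rather than Google's longest-match precedence, so treat it as a quick check, not an exact replica of Googlebot's behavior.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep crawlers out of the cart and internal
# search results, and declare where the sitemap lives.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def googlebot_can_crawl(url: str) -> bool:
    """Return True if the rules above allow Googlebot to fetch the URL."""
    return parser.can_fetch("Googlebot", url)


for url in ("https://example.com/blog/new-article", "https://example.com/cart/checkout"):
    print(url, "->", "crawlable" if googlebot_can_crawl(url) else "blocked")

# site_maps() lists the URLs declared with "Sitemap:" lines (Python 3.8+).
print("Declared sitemaps:", parser.site_maps())
```

If a page you want indexed comes back as "blocked", the matching Disallow line is the first thing to fix.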

Also check that the pages you want indexed are not marked as non-indexable. In fact, that’s our next item on the checklist.

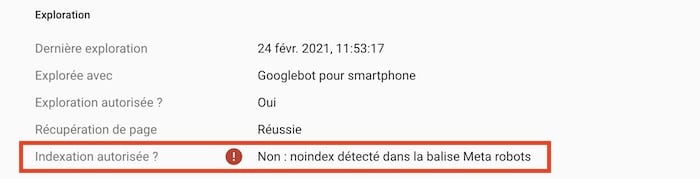

3. Noindex tags

If some of your pages aren’t indexed, it may be because they have "noindex" tags.

These tags tell search engines not to index the pages. Check for the presence of these two types of tags:

Meta tags

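A robots meta tag such as `<meta name="robots" content="noindex">` in a page's head keeps that page out of the index. You can find affected pages by looking for "noindex" warnings in your SEO audit tool, or under the "Excluded by 'noindex' tag" reason in Search Console's Page indexing report. If a page is marked noindex by mistake, remove the tag and submit the URL to Google so it can be indexed.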
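If you want to scan pages yourself, the check boils down to reading the robots meta tags in the HTML. Here is a minimal sketch using Python's built-in HTML parser, assuming you have already downloaded each page's HTML. It reads both the generic "robots" tag and the Googlebot-specific one, and treats "none" as shorthand for "noindex, nofollow"; the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # HTMLParser lowercases tag and attribute names; attribute values may be None.
        attributes = {name: value or "" for name, value in attrs}
        if attributes.get("name", "").lower() in ("robots", "googlebot"):
            content = attributes.get("content", "")
            self.directives.extend(d.strip().lower() for d in content.split(","))


def has_noindex_meta(html: str) -> bool:
    """Return True if the page's robots meta tags ask search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    # "none" is shorthand for "noindex, nofollow".
    return "noindex" in parser.directives or "none" in parser.directives


page = '<html><head><meta name="robots" content="noindex, follow"></head><body></body></html>'
print(has_noindex_meta(page))
```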

X-Robots-Tag headers

Using Google Search Console, you can see which pages send an "X-Robots-Tag" directive in their HTTP response headers.

Use the URL Inspection tool: after entering a page, look for the answer to the question "Indexing allowed?".

If you see the words "No: 'noindex' detected in the 'X-Robots-Tag'", you know there’s something you need to remove.
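The same check can be scripted against the HTTP headers a page returns. This is a minimal sketch, assuming you have collected every X-Robots-Tag value from the response (with urllib.request, for example, response.headers.get_all("X-Robots-Tag") returns them as a list, or None when the header is absent). The function name is hypothetical, and the optional crawler prefix (as in "googlebot: noindex") is handled in a simplified way.

```python
# Directives whose own syntax contains a colon, so "name:" is not a crawler prefix.
VALUE_DIRECTIVES = {"unavailable_after", "max-snippet", "max-image-preview", "max-video-preview"}


def x_robots_blocks_googlebot(header_values: list[str]) -> bool:
    """Return True if any X-Robots-Tag value tells Googlebot not to index the page.

    Simplified parsing: a value may start with a crawler name ("googlebot: noindex"),
    in which case its directives apply only to that crawler.
    """
    for value in header_values:
        scope = None  # None means the directives apply to every crawler
        for token in value.lower().split(","):
            token = token.strip()
            name, sep, rest = token.partition(":")
            if sep and name.strip() not in VALUE_DIRECTIVES:
                scope = name.strip()
                token = rest.strip()
            if token in ("noindex", "none") and scope in (None, "googlebot"):
                return True
    return False


print(x_robots_blocks_googlebot(["googlebot: noindex, nofollow"]))
```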

4. Use a sitemap

Another fairly common technique for speeding up Google indexing of your content is to use a sitemap.

It is simply a file that gives Google information about the pages, images, or videos on your website. It also tells the search engine which pages you consider important and when they were last updated.

Using a sitemap can prove particularly useful for Google indexing in the following cases:

- Your site hosts many pages and varied content: a sitemap reduces the risk of Google missing some of them.

- You haven't linked all your pages together: a sitemap lets Google discover URLs it cannot reach by following links, and comparing it with your internal links helps you find orphan pages.

- Your site is still too new to have backlinks from other sites: a sitemap lets you notify Google that your pages exist.

You can submit your sitemap in Search Console's Sitemaps report and also declare its location in your robots.txt file with a "Sitemap:" line.
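If your CMS does not generate a sitemap for you, a basic one is easy to produce. This is a minimal sketch using Python's xml.etree.ElementTree and the sitemaps.org namespace; the URLs and dates are hypothetical placeholders, and only the loc and lastmod fields are shown.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
# Serialize the sitemap namespace as the default xmlns instead of an "ns0:" prefix.
ET.register_namespace("", SITEMAP_NS)


def build_sitemap(pages: list[tuple[str, str]]) -> bytes:
    """Build sitemap.xml content from (URL, last-modified date) pairs."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = loc
        ET.SubElement(url, f"{{{SITEMAP_NS}}}lastmod").text = lastmod  # W3C date, e.g. 2024-02-01
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Hypothetical pages and dates.
sitemap = build_sitemap([
    ("https://example.com/", "2024-01-15"),
    ("https://example.com/blog/new-article", "2024-02-01"),
])
print(sitemap.decode("utf-8"))
```

Updating the lastmod date whenever a page changes is what lets the sitemap signal recent updates.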

5. Monitor canonical tags

Canonical tags tell crawlers which version of a page is the preferred one.

If a page does not have a canonical tag, Googlebot assumes it is the preferred page and that it is the only version of that page: it will therefore index that page.

But if a page's canonical tag points to a different URL, Googlebot assumes that other URL is the preferred version and may not index the page the tag is on. A canonical tag pointing to the wrong URL can therefore keep a page out of the index.

Use Google's URL Inspection tool to check for the presence of canonical tags.
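To audit canonical tags across many pages, you can also extract them yourself. This is a minimal sketch, assuming you already have each page's URL and HTML; it resolves relative href values against the page URL and flags pages whose canonical names a different URL. The names are hypothetical, and the comparison is an exact string match, so trailing-slash or tracking-parameter variants are flagged too and deserve a manual look.

```python
from html.parser import HTMLParser
from typing import Optional
from urllib.parse import urljoin


class CanonicalParser(HTMLParser):
    """Remember the href of the first <link rel="canonical"> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.href: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        attributes = {name: value or "" for name, value in attrs}
        rel_values = attributes.get("rel", "").lower().split()
        if tag == "link" and "canonical" in rel_values and self.href is None:
            self.href = attributes.get("href")


def find_canonical(page_url: str, html: str) -> Optional[str]:
    """Return the absolute canonical URL declared in the page, or None."""
    parser = CanonicalParser()
    parser.feed(html)
    parser.close()
    if not parser.href:
        return None
    return urljoin(page_url, parser.href)  # resolve relative hrefs such as "/blog/post"


def canonical_points_elsewhere(page_url: str, html: str) -> bool:
    """True if the canonical tag names a different URL (exact string comparison)."""
    canonical = find_canonical(page_url, html)
    return canonical is not None and canonical != page_url
```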

6. Improve internal linking

Internal links help crawlers find your web pages. Because your sitemap lists all the content on your website, comparing it with your internal links lets you identify pages that are not linked.

- Unlinked pages, called "orphan pages", are rarely indexed.

- Remove nofollow from internal links. A nofollow attribute tells Google not to follow the link (Google treats it as a hint), so an internal link marked nofollow may not help Googlebot discover the page it points to.

- Add internal links from your best pages. Bots discover new content by crawling your website, and internal links speed up this process. Link to your new pages from your high-ranking pages to help them get indexed faster.
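Spotting orphan pages comes down to a set difference: the URLs in your sitemap minus the URLs that at least one followed internal link points to. This is a minimal sketch, assuming you have exported your internal links from a crawler into a hypothetical mapping of source page to (target URL, nofollow flag) pairs; nofollow links are not counted, in line with the advice above.

```python
# Hypothetical export format: source URL -> list of (target URL, has rel="nofollow").
InternalLinks = dict[str, list[tuple[str, bool]]]


def find_orphan_pages(sitemap_urls: list[str], internal_links: InternalLinks) -> list[str]:
    """Return sitemap URLs that no followed internal link from another page points to."""
    reachable = set()
    for source, links in internal_links.items():
        for target, nofollow in links:
            if target != source and not nofollow:  # ignore self-links and nofollow links
                reachable.add(target)
    return sorted(set(sitemap_urls) - reachable)


sitemap_urls = [
    "https://example.com/",
    "https://example.com/blog/",
    "https://example.com/blog/new-article",
    "https://example.com/old-landing-page",
]
internal_links = {
    "https://example.com/": [("https://example.com/blog/", False)],
    "https://example.com/blog/": [
        ("https://example.com/", False),
        ("https://example.com/blog/new-article", False),
        ("https://example.com/old-landing-page", True),  # only reached through nofollow
    ],
    "https://example.com/blog/new-article": [("https://example.com/blog/", False)],
}
print(find_orphan_pages(sitemap_urls, internal_links))  # ['https://example.com/old-landing-page']
```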

7. External links

Google recognizes the importance of a page and grants it trust when it is recommended by authoritative sites. Backlinks from those sites tell Google that a page should be indexed.

8. Share on social networks

Google also monitors social networks, notably Twitter, where it appears to pick up new links very quickly.

Sharing your new content on these social networks helps bring Googlebot to your page quickly. The faster it is crawled, the sooner it will be indexed.

Need an SEO consultant to help you get your site indexed faster and improve your position in search engine results? Post a project for free on Codeur.com and get your first ten quotes within the hour!