We tend to believe that everything depends on the model. GPT, Gemini, Mistral, Claude, LLaMA… As if the magic came from them alone. What if that isn’t (entirely) the case? What if the real difference lies in how we speak to them? That’s the central idea of the document published by Lee Boonstra, tech lead at Google, who lays out a genuine method for conversing with an LLM. And spoiler: we’re far from the “magical” prompts you see on LinkedIn.

Key takeaways:

- An LLM's performance depends as much on its generation settings as on the quality of the prompt provided.

- Settings such as temperature, top-K, and top-P strongly influence the coherence or creativity of the responses.

- Techniques such as zero-shot, few-shot, and Chain of Thought allow more precise guidance of the AI's reasoning.

- Google now provides a real workflow to structure, test, and document prompts professionally.

- Advanced approaches such as Tree of Thoughts or Self-consistency pave the way for more complex and reliable uses.

Understanding the levers of LLM generation

Temperature: your creativity slider

Do you like stable, unsurprising answers? Or do you prefer a touch of madness in a model’s output? That’s where the "temperature" parameter comes into play.

The lower the value (close to 0), the more predictable, almost robotic, the model becomes. Useful for tasks like calculation, classification, or regulatory writing. Conversely, a temperature around 0.8–1 injects variety into the suggestions. Ideal for writing a poem, a catchy email, or generating multiple variants of meta descriptions.

No right or wrong setting, just a question of intent.
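To make the slider concrete, here is a minimal sketch of how temperature is commonly applied: the model's raw scores are divided by the temperature before being turned into probabilities. The scores below are made up for illustration, and real models may handle a temperature of exactly 0 as a special case (always picking the top token).

```python
import math

def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; a lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be > 0; treat 0 as always picking the top token")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next words.
logits = [2.0, 1.0, 0.5]
cold = softmax_with_temperature(logits, 0.2)  # almost all the weight on the first word
warm = softmax_with_temperature(logits, 1.0)  # weight spread across all three
print([round(p, 3) for p in cold])
print([round(p, 3) for p in warm])
```

At 0.2 the favorite word takes nearly everything; at 1.0 the other two keep a real chance of being drawn.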

Top-K and top-P: how to limit (or expand) the realm of possibilities

These two parameters filter the model’s choices before it decides which word to write next.

- Top-K: the model chooses among the K most probable words. K=1? It always selects the most probable. K=40? It has more room to innovate.

- Top-P: here, we don't fix a number of words, but a cumulative probability threshold. P=0.9 means the model can only draw from the smallest set of most probable words whose probabilities add up to at least 90%.

These settings directly influence the tone of the responses. And combining them with temperature can create… magic, or rambling chaos.
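The two filters can be sketched in a few lines. This is a simplified illustration on a hand-written probability table, not how any particular model implements it; the words and probabilities are invented.

```python
def top_k_filter(probs, k):
    """Keep the k most probable words and renormalize their probabilities."""
    kept = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)[:k]
    total = sum(p for _, p in kept)
    return {word: p / total for word, p in kept}

def top_p_filter(probs, p):
    """Keep the smallest set of top words whose cumulative probability reaches p."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = [], 0.0
    for word, prob in ranked:
        kept.append((word, prob))
        cumulative += prob
        if cumulative >= p:
            break
    total = sum(prob for _, prob in kept)
    return {word: prob / total for word, prob in kept}

# Hypothetical next-word probabilities.
probs = {"cat": 0.5, "dog": 0.3, "fish": 0.15, "lamp": 0.05}
print(top_k_filter(probs, 1))    # only the favorite survives
print(top_p_filter(probs, 0.9))  # the unlikely "lamp" is dropped
```

The model then samples from whatever survives the filter, which is why a small K or P makes answers more conservative.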

Output length: the silent trap

Don't count on the model to "guess" when to stop. You set a maximum number of tokens to generate, and that limit is a hard cut. Too short, and the answer is cut off. Too long, and the model can ramble or go off-topic. This point is crucial for techniques like ReAct or Chain of Thought, where every step of the reasoning matters.
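A toy generation loop shows why the limit behaves like a trap. The function and the "stop"/"length" labels are illustrative assumptions, not a specific API: the point is simply that the cap cuts the text wherever it happens to be.

```python
def generate(token_stream, max_tokens, stop_token="<eos>"):
    """Collect tokens until the stream signals the end or the cap is reached."""
    output = []
    for token in token_stream:
        if token == stop_token:
            return output, "stop"    # the text ended on its own
        if len(output) >= max_tokens:
            return output, "length"  # cut off by the cap, possibly mid-reasoning
        output.append(token)
    return output, "stop"

# Hypothetical token stream standing in for a model's step-by-step answer.
stream = ["First,", "add", "the", "totals.", "Answer:", "42", "<eos>"]
print(generate(iter(stream), max_tokens=3))
print(generate(iter(stream), max_tokens=50))
```

With a cap of 3, the final answer never appears, which is exactly what happens when a step-by-step response runs out of budget.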

We have the basics. Now, let's see how to use these levers through the most effective prompting techniques.

Mastering prompting techniques

Zero-shot: the direct approach, without a safety net

This is the raw format: an instruction, no example. Useful when the task is simple or standard. But be careful: the model can misinterpret what you are asking for.

A prompt like:

Classify this comment: "This movie is slow but touching."

…can produce inconsistent results. Classify it how: by sentiment, by genre, by topic? The model hesitates; it lacks reference points.

Few-shot: showing the way by example

You provide two or three well-crafted, similar examples, and the model aligns with that pattern. This is particularly useful for standardized formats (JSON, tables, etc.) or precise business tasks. It's a bit like showing an example to a colleague before asking them to continue.
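As a rough sketch, a few-shot prompt is just the instruction, the examples, and the new case laid out in one consistent pattern. The helper name, the labels, and the sample comments are made up for illustration.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Lay out instruction, labeled examples, and the new case in one repeated pattern."""
    lines = [instruction, ""]
    for text, label in examples:
        lines += [f'Comment: "{text}"', f"Sentiment: {label}", ""]
    # End on an open "Sentiment:" so the model completes the pattern.
    lines += [f'Comment: "{query}"', "Sentiment:"]
    return "\n".join(lines)

examples = [
    ("A masterpiece from start to finish.", "POSITIVE"),
    ("Two hours I will never get back.", "NEGATIVE"),
    ("Great cast, but the ending falls flat.", "MIXED"),
]
prompt = build_few_shot_prompt(
    "Classify the sentiment of each comment as POSITIVE, NEGATIVE or MIXED.",
    examples,
    "This movie is slow but touching.",
)
print(prompt)
```

Including a MIXED example is a deliberate choice here: it gives the model a reference point for ambivalent comments like the one from the zero-shot section.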

System, role and contextual prompting: framing the mission

- System prompting: we set the rules. "You always respond in JSON", "You act as an SEO auditor", etc.

- Role prompting: we give the model a persona. A tour guide, a lawyer, a recruiter.

- Contextual prompting: we provide context in advance. "You write for a blog about retro games", for example.

These techniques give a framework, which allows the model to better understand what we expect from it. And above all, not to improvise unnecessarily.
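The three framings can be combined in one request. The sketch below assumes a chat-style message list with "system" and "user" roles, a layout many chat interfaces use; the exact field names depend on the provider, and the helper itself is hypothetical.

```python
def build_messages(system_rules, role, context, task):
    """Combine system rules, a persona, and background context ahead of the task."""
    system_text = "\n".join([*system_rules, f"Role: {role}", f"Context: {context}"])
    return [
        {"role": "system", "content": system_text},  # the frame: rules, persona, context
        {"role": "user", "content": task},           # the actual request
    ]

messages = build_messages(
    system_rules=["You always respond in JSON."],
    role="You act as an SEO auditor.",
    context="You write for a blog about retro games.",
    task="Suggest three title ideas for an article about 16-bit consoles.",
)
for message in messages:
    print(message["role"], "->", message["content"])
```

Keeping the frame separate from the task makes it easy to reuse the same rules across many requests.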

Chain of Thought: force the model to reason

A simple instruction is enough: "Think step by step." And then, surprise: the model no longer just gives an answer, but unfolds a line of reasoning, a deduction. It's particularly effective for calculations, scenarios, or logic questions.
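In practice you usually want the reasoning and a final answer you can pick out. One possible convention, assumed here for illustration, is to ask for a last line starting with "Answer:" and extract it afterwards. The model response below is hand-written, not real output.

```python
import re

def build_cot_prompt(question):
    """Append the step-by-step instruction and a fixed format for the final line."""
    return (
        f"{question}\n"
        "Think step by step, then give the result on a final line starting with 'Answer:'."
    )

def extract_answer(response):
    """Return the text of the last 'Answer:' line, or None if there is none."""
    matches = re.findall(r"^Answer:[ \t]*(.+)$", response, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None

prompt = build_cot_prompt("A shop sells 3 boxes of 4 cartridges and 2 loose ones. How many in total?")
# Hand-written stand-in for a model response.
response = "Step 1: 3 boxes x 4 = 12\nStep 2: 12 + 2 = 14\nAnswer: 14"
print(extract_answer(response))
```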

And that paves the way for even more advanced techniques.

Some concrete use cases to adapt to your projects

Are you doing content, development, data, or semantic analysis? Here are some realistic scenarios:

- Customer order parsing: transform "I want a large pizza, half mozzarella, half pepperoni" into a structured JSON object.

- Sentiment classification: tag customer reviews by their tone, even when ambivalent.

- Code review: ask the AI to explain a JS or Python function, line by line.

- Contextual translation: rephrase a title for YouTube, an SEO snippet, or a marketing tagline.

Provided you have the right prompt, of course.
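For the order-parsing case, the prompt is only half the job: the output still has to be checked before it reaches your systems. Here is a minimal sketch, with a made-up schema ("size" and "toppings") and a hand-written string standing in for the model's reply.

```python
import json

# Hypothetical schema: the keys every parsed order must contain.
REQUIRED_KEYS = {"size", "toppings"}

def parse_order(raw):
    """Parse the model's reply and reject anything that is not a usable order."""
    try:
        order = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(order, dict):
        raise ValueError("reply must be a JSON object")
    missing = REQUIRED_KEYS - order.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return order

# Hand-written stand-in for what the model might return.
raw = '{"size": "large", "toppings": [{"half": 1, "name": "mozzarella"}, {"half": 2, "name": "pepperoni"}]}'
order = parse_order(raw)
print(order["size"], [t["name"] for t in order["toppings"]])
```

A rejected reply can simply trigger a retry, ideally with the error message added to the prompt.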

Advanced techniques for demanding prompts

Self-consistency: vote for the right answer

You run the same prompt several times with settings that allow variety (a higher temperature, for example). Then you compare the responses... and keep the one that appears most often. It's an elegant way to stabilize a model's outputs.

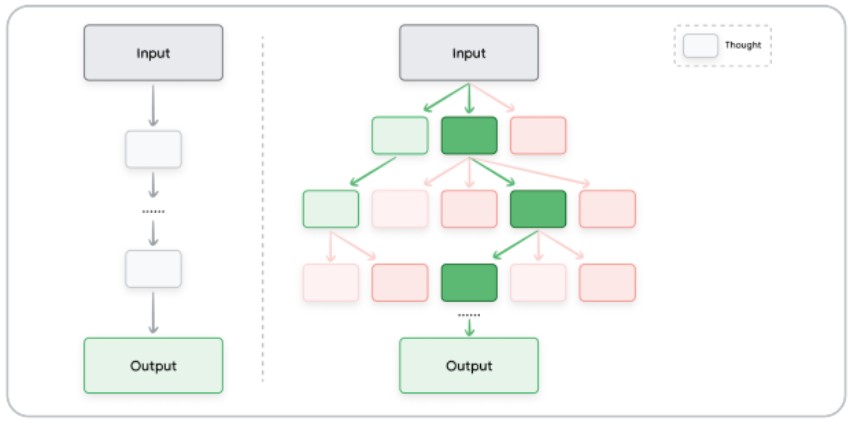
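The vote itself is simple to sketch. In the example below, `sample_fn` is a placeholder for a real model call made at a higher temperature; here it just replays a hand-written list of answers.

```python
from collections import Counter

def self_consistent_answer(sample_fn, prompt, n=5):
    """Ask the same question n times and keep the most frequent answer."""
    answers = [sample_fn(prompt) for _ in range(n)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / n  # the answer and the share of runs that agree

# Stand-in for five sampled model answers to the same question.
samples = iter(["12", "12", "15", "12", "11"])
answer, agreement = self_consistent_answer(
    lambda prompt: next(samples), "How many eggs are left?", n=5
)
print(answer, agreement)
```

The agreement ratio is a useful by-product: a low value suggests the question, or the prompt, deserves a closer look.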

Tree of Thoughts: think like a tree

Instead of linear reasoning, the model explores multiple avenues in parallel, like a decision tree. This allows it to produce richer, more complex, sometimes unexpected... but relevant answers.
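One simple way to picture this is a search that keeps several partial lines of reasoning alive at each step instead of committing to one. The sketch below is a toy under stated assumptions: `propose` and `score` would be model calls in a real setup, whereas here they are plain functions on numbers, and the goal is to reach 10 starting from 1 by adding 3 or doubling.

```python
def tree_of_thoughts(root, propose, score, depth=3, beam_width=2):
    """Expand every kept path, score the results, and keep only the best few."""
    frontier = [[root]]
    for _ in range(depth):
        candidates = [path + [step] for path in frontier for step in propose(path)]
        if not candidates:
            break
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam_width]
    return frontier[0]

def propose(path):
    """Toy stand-in for 'generate next thoughts': add 3 or double the last value."""
    return [path[-1] + 3, path[-1] * 2]

def score(path):
    """Toy stand-in for 'evaluate this line of thought': closeness to the target 10."""
    return -abs(10 - path[-1])

print(tree_of_thoughts(1, propose, score, beam_width=2))  # explores two branches
print(tree_of_thoughts(1, propose, score, beam_width=1))  # linear, one branch only
```

With two branches kept alive, the search lands exactly on 10; with a single branch it commits early to the path that looks best at step two and ends on 11.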

Automatic Prompt Engineering: AI that devises its own instructions

We ask the LLM to generate prompt variants for the same task, then we evaluate them automatically. It sounds meta, but it's hugely effective at large scale (chatbots, assistants, automation).
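The evaluation half of that loop can be sketched as follows. Everything here is illustrative: the candidate prompts are written by hand (in a real setup the LLM would generate them), and `fake_model` is a stub that only answers correctly when the prompt names the expected labels.

```python
def pick_best_prompt(candidates, eval_set, run_model):
    """Score each candidate prompt on labeled examples and return (accuracy, prompt)."""
    def accuracy(prompt):
        hits = sum(run_model(prompt, text) == expected for text, expected in eval_set)
        return hits / len(eval_set)
    scored = [(accuracy(prompt), prompt) for prompt in candidates]
    return max(scored, key=lambda pair: pair[0])

def fake_model(prompt, text):
    """Stub model: only useful when the prompt spells out the allowed labels."""
    if "positive or negative" not in prompt:
        return "unknown"
    return "positive" if "great" in text else "negative"

eval_set = [("great pacing", "positive"), ("dull and long", "negative")]
candidates = [
    "Classify this review.",
    "Answer with exactly one word, positive or negative: what is the tone of this review?",
]
best_score, best_prompt = pick_best_prompt(candidates, eval_set, fake_model)
print(best_score, best_prompt)
```

Exact-match accuracy is the simplest possible metric; free-text tasks need a scoring function suited to the output.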

Structuring your prompts: best practices to adopt

It's not enough to have a good idea. You also need to express it well. Here are some simple but effective principles:

- Document your iterations: model version, temperature, exact prompt. Is it tedious? Yes. But it's like SEO: what you don't measure, you can't control.

- Be explicit: "Analyze the code below" is not enough. Specify what you expect: line-by-line explanation, error detection, rephrasing...

- Enforce a format: JSON, table, bullet points... Less room for interpretation, more control.

- Test as a team: everyone will have different intuitions. That's often where the best prompts emerge.
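Documenting iterations does not require heavy tooling. One possible approach, sketched here with made-up field names and a placeholder model name, is to store each attempt as one JSON line that can be appended to a shared file.

```python
import json
from dataclasses import dataclass, asdict

@dataclass
class PromptRun:
    """One documented attempt: what was sent, with which settings, and how it went."""
    name: str
    model: str
    temperature: float
    top_k: int
    top_p: float
    max_tokens: int
    prompt: str
    output: str
    verdict: str  # e.g. "OK", "NOT OK", "SOMETIMES OK"

def to_jsonl(run):
    """Serialize a run as a single JSON line."""
    return json.dumps(asdict(run), ensure_ascii=False)

run = PromptRun(
    name="sentiment-few-shot-v2",
    model="example-model-v1",  # placeholder, not a real model name
    temperature=0.2,
    top_k=40,
    top_p=0.9,
    max_tokens=16,
    prompt='Classify this comment: "This movie is slow but touching."',
    output="MIXED",
    verdict="OK",
)
print(to_jsonl(run))
```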

What if, in the end, the prompt were the new creative brief? The one that makes the difference between an LLM that truly helps you… and an LLM that just rambles on.

Mastering parameters such as temperature or top-K, knowing how to balance zero-shot and few-shot, daring to try approaches like Chain of Thought or ReAct… none of this is improvised. But with a bit of method (and lots of testing), you can turn a simple language model into a real domain-specific co-pilot.

Are you already using these techniques in your projects? Have you found a format that works particularly well for generating content, structuring data, or automating certain SEO tasks?

Share your feedback in the comments: good ideas, tips, or things that went wrong. We'd love to hear about them.

The article “The art of prompting according to Lee Boonstra (Tech Lead at Google)” was published on the site Abondance.